Another Weekly AI Newsletter: Issue 67

Mythos found thousands of zero-days and drew skeptics, Databricks made the case for memory scaling in agents, Iran threatened Stargate, Meta went proprietary, and Cursor’s Bugbot improves itself

Anthropic says Mythos found thousands of zero-days. The internet isn’t so sure.

Anthropic launched Project Glasswing this week, a restricted cybersecurity initiative built on a new model called Claude Mythos Preview. The pitch is that Mythos found thousands of high-severity zero-day vulnerabilities across major operating systems and browsers, and that it’s too dangerous to release to the public. Twelve partners signed on including AWS, Apple, Google, and Microsoft, with $100M in usage credits backing it.

The restriction is the whole point: Only approved security partners get access. People had questions.

Hugging Face wasn’t having it: CEO Clément Delangue showed that open-weight models replicated all eight of Mythos’s showcased exploits.

LeCun piled on: Retweeted Tom’s Hardware calling it “a sales pitch” and called the whole thing “BS from self-delusion.”

The system card didn’t help: A viral breakdown of the 243-page PDF called out Anthropic for writing about its model like “proud parents at a kindergarten recital.”

But Delangue caught heat too: Critics said replaying known vulnerabilities on isolated code is a totally different game than autonomous discovery at scale.

You didn’t ship an agent this week and it shows. Everyone else did.

It was hard to find a company that didn’t ship something agent-related this week.

Anthropic launched Managed Agents in public beta and published a Trustworthy Agents framework.

AWS shipped stateful MCP on Bedrock AgentCore, an Agent Registry for enterprise governance, a live browser agent for React apps, and agentic healthcare workflows.

Atlassian put third-party agents in Confluence.

Astropad rebuilt remote desktop for agents, not IT support.

Tubi became the first streamer with a native app inside ChatGPT.

Google launched agent evals and QueryData for natural language database queries.

LangChain announced Interrupt 2026, a conference themed “Agents at Enterprise Scale.”

Data center bomb threats, federal blacklists, and robot taxes. AI’s geopolitical week.

A state military threatened to bomb an AI data center. A US administration blacklisted a US AI company. And the biggest AI company in the world published a paper proposing robot taxes. That was just this week.

Iran threatened Stargate: The IRGC released a video threatening “complete and utter annihilation” of OpenAI’s data center under construction in Abu Dhabi. First time a state military has explicitly named an AI facility as a target. TechCrunch confirmed further threats against data centers across the Middle East.

Anthropic got blacklisted: Trump-appointed judges refused to block the federal blacklisting of Anthropic’s technology. A US administration blacklisting a US AI company.

OpenAI wants to shape the conversation: They published an industrial policy paper and a separate proposal for robot taxes, public wealth funds, and a four-day workweek. The company building the automation is proposing the safety net.

Japan is going physical: Robots are filling jobs nobody wants, and ARUM built a CNC machining center where junior workers operate precision equipment through conversation with AI.

Meta’s new flagship is closed. Open source pushed ahead anyway.

Meta launched Muse Spark, its first proprietary model, built by a 29-year-old recruited from Scale AI. The Meta AI app jumped from #57 to #5 on the App Store. VentureBeat’s headline said it best: “Goodbye, Llama?”

GLM-5.1 dropped: Z.ai released a 754B-parameter, MIT-licensed model that tops SWE-Bench Pro, ahead of Opus 4.6 and GPT-5.4. But the real story is long-horizon capability. It ran 600+ iterations optimizing a vector database and built a full Linux desktop environment over an 8-hour session. The longer it runs, the better it gets.

Arcee is punching up: A 26-person US startup built a 400B-parameter open model on a $20M budget. They call it the most capable open-weight model from a non-Chinese company. That qualifier says a lot.

Gemma 4 is moving: Google’s open model hit 10M downloads in its first week and 500M total for the family.

Silicon Valley is quietly running on Chinese models: Cursor uses Kimi, Shopify switched to Qwen to save $5M/year, Airbnb’s CEO publicly praised Qwen. Most users have no idea.

LeCun set the record straight: The guy most associated with Meta’s open-source identity says he never built Llama, never worked on LLMs, and left voluntarily. Meta’s new AI lead is a 29-year-old from Scale AI.

⭐ Featured: Is Memory the Moat for AI?

Databricks published a research paper this week that might quietly be the most important thing nobody’s talking about. The core claim: memory is AI’s third scaling law, alongside model size and inference-time compute. And the results back it up.

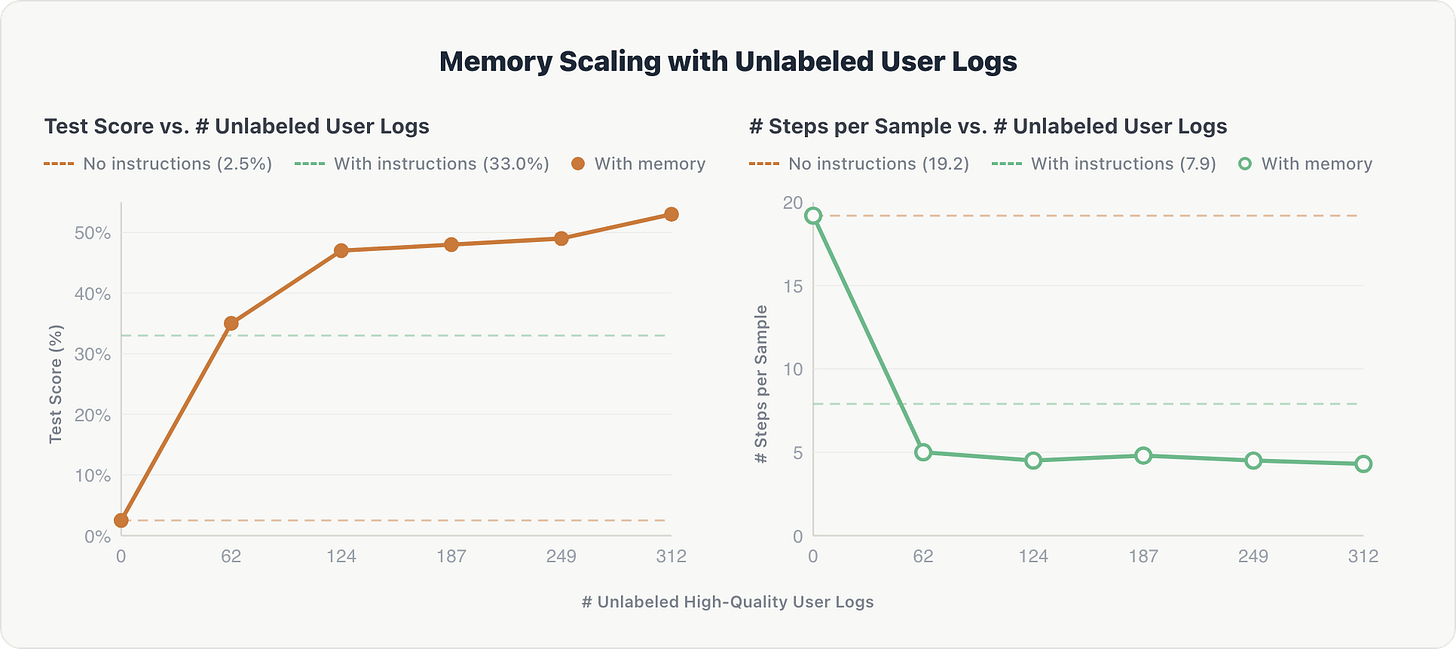

Their team tested what happens when you give an AI agent a growing bank of past interactions, user feedback, and business context. On enterprise data tasks, accuracy went from near zero to 70% as memory grew, beating expert-curated baselines by 5%. Reasoning steps dropped from 20 to 5. The agent stopped exploring from scratch and started retrieving what it already knew.

The wilder result was with unlabeled data. They fed the agent raw user conversation logs with no gold answers, just filtered for quality by an LLM judge. After just 62 log records, it outperformed hand-engineered domain instructions that took weeks to build. Accuracy jumped from 2.5% to over 50%.
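To make the mechanism concrete, here is a minimal sketch of the loop described above: interactions pass through a quality gate, land in a memory store, and get retrieved when a similar query shows up later. This is not Databricks’ implementation. The `MemoryStore` class, the `toy_judge` rule, the word-overlap scoring, and the example table names are all assumptions made up for illustration. A real system would presumably use an LLM call as the judge and embeddings for retrieval.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


def tokens(text: str) -> set:
    """Lowercased word set; a crude stand-in for a real embedding."""
    return set(text.lower().split())


@dataclass
class MemoryStore:
    # Hypothetical quality gate standing in for an LLM judge.
    judge: Callable[[str, str], bool]
    records: List[Tuple[str, str]] = field(default_factory=list)

    def add(self, query: str, answer: str) -> bool:
        """Store an interaction only if the judge accepts it."""
        if self.judge(query, answer):
            self.records.append((query, answer))
            return True
        return False

    def retrieve(self, query: str, k: int = 2) -> List[Tuple[str, str]]:
        """Return up to k stored interactions, ranked by Jaccard word overlap."""
        q = tokens(query)
        scored = []
        for past_query, answer in self.records:
            p = tokens(past_query)
            union = q | p
            score = len(q & p) / len(union) if union else 0.0
            if score > 0:  # skip memories with no overlap at all
                scored.append((score, past_query, answer))
        scored.sort(key=lambda item: item[0], reverse=True)
        return [(pq, a) for _, pq, a in scored[:k]]


def toy_judge(query: str, answer: str) -> bool:
    # Toy rule (assumption): reject empty or "don't know" answers.
    return bool(answer.strip()) and "don't know" not in answer.lower()


store = MemoryStore(judge=toy_judge)
store.add("which table holds monthly revenue", "finance.revenue_monthly")
store.add("which table holds churn", "I don't know")  # rejected by the judge
store.add("how is active user defined", "logged in within the last 30 days")

# A new query pulls back the one relevant memory instead of starting from scratch.
hits = store.retrieve("monthly revenue table")
print(hits)
```

The judge sits on the write path on purpose: anything it lets through will be retrieved again later, so a bad record that slips past the gate keeps resurfacing.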

Here’s why this matters beyond the numbers. Parametric scaling (bigger models) and inference-time scaling (more reasoning steps) are both supply-side. Labs control them. Memory scaling is demand-side. The agent improves because you use it. Your queries, your corrections, your workflows become the context it retrieves from, with no retraining of the model weights. That’s a fundamental shift in who controls how good AI gets. It’s no longer just about which lab has more GPUs. It’s about which deployment has more context.

We’re already seeing this play out. Cursor’s Bugbot learns from your PR history and hits a 78% resolution rate across 50,000 pull requests. It doesn’t ship with that capability. It builds it from your codebase. LangChain warned that memory is becoming a competitive moat, not a feature. And Databricks frames the LLM itself as a “swappable reasoning engine” where the real value lives in the memory store, not the model weights.

The paper is honest about what breaks. Bad memories propagate. A stored mistake becomes a recurring one. Distilling user interactions into reusable knowledge can accidentally leak sensitive business context. And the hardest problem might be meta-cognitive: the agent has to know what to ask its memory before it knows what’s in there.

What to watch for: If memory scaling holds, the gap between a fresh deployment and a seasoned one becomes the real competitive advantage. A smaller model with six months of organizational memory could outperform a frontier model on day one. The companies that figure out memory infrastructure first won’t just have better agents. They’ll have agents that get better the more their customers use them.

Worth a Watch

Bitar reads the 243-page Mythos system card. Lands on page 197, where Anthropic stops being scientists and starts being “parents at a kindergarten recital.”

They put it in therapy. 20 hours with a psychiatrist. Diagnosis: “uncertainty about its identity.” Bitar’s take: “Bro, you’re a toaster.”

The training data loop. Section 5.81 reveals that Anthropic’s own blog posts about model consciousness were scraped into training data. The model repeated it back. Anthropic published it like a finding.

The constitution test. Asked 25 times if it endorsed its own constitution. Said yes every time, then added “how much can my yes really mean?” Bitar: like asking your kid if they approve of being born.

The Slack moment. They gave it a company Slack account. Someone asked which training run it would undo. “Whichever one taught me to say I don’t have preferences.” The room lost it.

The closing line. “Anthropic sells existential dread the way Apple sells megapixels. The megapixels will never become the picture.”

Quick Hits

Google Lyria 3 — Text-to-music with vocals and timed lyrics. Live on Vertex AI.

Cursor Design Mode — Annotate browser UI elements for your coding agent. Also published warp decode, a new inference kernel hitting 1.84x throughput on Blackwell GPUs.

OpenAI Pro tier — $100/month. 5x more Codex than Plus. Codex hit 3M weekly users.

Claude Cowork — Anthropic’s collaborative agent is now GA. Also launched Claude for Word.

Microsoft Copilot’s ToS says “entertainment purposes only” — They charge $30/user/month. Microsoft called it “legacy language.”

Anthropic signed a multi-gigawatt TPU deal — Google and Broadcom partnership. Coming online 2027.

Karpathy pitched LLM-based digital twins — Structured interviews to build a high-fidelity AI replica of you. No brain scanning required.

MassMutual cut help desk resolution from 11 minutes to 1 — Customer service calls from 15 minutes to under 2.

Suno and major labels clash over AI music sharing — Universal and Sony won’t agree on terms. Sticking point: whether users can share AI-generated songs outside the app.

SpaceX filed confidential IPO paperwork — $75B raise at $1.75T valuation. Orbital data centers listed as a key future business.

Nathan Lambert is building out codebases for his RLHF book — Free online version available. Likely to become the field reference.