Another Weekly AI Newsletter: Issue 70

Musk admitted xAI distilled OpenAI models. Anthropic's worth $900B and 50% less sycophantic. OpenAI ended Microsoft exclusivity. Symphony turns Linear into an autonomous coding fleet. Google Search hit a record.

“You can’t just steal a charity.” Elon Musk spent three days on the stand trying to prove it.

The Musk v. OpenAI trial opened in Oakland federal court.

The context: Musk contributed $38 million to found OpenAI as a nonprofit and alleges Altman and Brockman looted it by converting to a for-profit. He’s seeking $150 billion in damages and their removal from leadership. If he wins, it could block OpenAI’s planned IPO at a ~$1 trillion valuation.

The distillation admission: Under cross-examination, Musk admitted xAI “partly” used OpenAI’s models to train Grok, drawing audible gasps in the courtroom. He called it “standard practice.”

The industry reacted: LeCun retweeted Clément Delangue calling restrictions on distillation “pulling the ladder.” Lambert noted American companies distill Chinese open models just as freely, and questioned why OpenAI doesn’t just revoke violators’ contracts, as it did with ByteDance.

OpenAI’s counter-narrative: Attorney Savitt argued Musk wanted majority control, pitched Tesla acquiring OpenAI, and only sued after founding xAI. Emails showed him poaching OpenAI researchers while still on the board.

The cross-examination was rough: Musk told the jury “I don’t lose my temper” then raised his voice minutes later. The Verge’s summary: “more petty than prepared.” Texts revealed Shivon Zilis asked Musk whether to “stay close and friendly to OpenAI to keep info flowing” after his departure.

What’s next: The judge expressed skepticism about both sides’ safety claims. Altman and Brockman testify in the coming weeks.

$900 billion valuation, 50% less sycophancy, and connectors for every creative tool you use.

Anthropic had one of those weeks where the breadth of activity tells the story.

The valuation: Reportedly raising $50 billion at a $900 billion valuation, a number that rivals established tech giants.

The sycophancy research: Analyzed 1 million Claude conversations, found a 9% sycophancy rate (25% in relationship discussions), built synthetic training scenarios from real failure cases, and cut sycophancy roughly 50% in Opus 4.7 and Mythos Preview. One of the most transparent published alignment efforts to date.

BioMysteryBench: Claude solved roughly 30% of 23 bioinformatics problems that stumped a human expert panel.

Claude for Creative Work: Shipped connectors for Adobe Creative Cloud, Blender, Ableton, Canva, Affinity, SketchUp, Splice, and Resolume, and joined the Blender Development Fund as a patron.

Claude Security: Launched codebase vulnerability scanning in public beta for Enterprise customers.

Meanwhile, at the Senate: Defense Secretary Hegseth called CEO Dario Amodei an “ideological lunatic” at an Armed Services Committee hearing.

OpenAI ended its Microsoft exclusivity and went multi-cloud.

OpenAI restructured its Microsoft deal, launched on AWS, and shipped a wave of Codex upgrades all in the same week.

The exclusivity is over: Microsoft ended its exclusive license to OpenAI’s technology. OpenAI can now sell on AWS and Google Cloud through 2032.

AWS moved immediately: Amazon began offering OpenAI models, Codex, and Managed Agents on AWS. Day-zero availability.

The AGI clause is dead: Simon Willison tracked the history of the clause that would have let OpenAI walk away from Microsoft once AGI was declared. It’s gone. OpenAI traded its theoretical nuclear option for commercial freedom now.

The product push: Altman said Codex is “having a ChatGPT moment.” Brockman said the Codex app replaced his terminal as his primary computer interface. OpenAI is treating Codex as a flagship product launch, not a side feature.

Nadella’s take: Microsoft gets royalty-free access to OpenAI’s frontier models through 2032, no longer pays OpenAI for them, and OpenAI is committed to buying $250 billion in Azure. Nadella told analysts he “fully plan[s] to exploit it.”

Most cloud providers beat earnings. OpenAI missed.

The hyperscalers are spending record amounts on AI infrastructure and seeing record returns. Meanwhile, the Wall Street Journal reported that OpenAI missed revenue and user growth targets, with Anthropic and Gemini cited as gaining ground.

The cloud numbers: Google Cloud surpassed $20 billion but said growth was capacity-constrained. AWS surged on AI demand. Microsoft disclosed a $37 billion AI revenue run rate (up 123% YoY), 20 million paid Copilot users, and set calendar-year CapEx at $190 billion.

The supply chain is feeling it: Samsung chip profits jumped nearly 50-fold on AI memory demand. Their executive: “our supply falls far short of customer demand.” The shortage is expected to widen further in 2027.

Meta is the most interesting story: Raised its CapEx forecast, then Zuckerberg blamed layoffs on capital spending and wouldn’t rule out more cuts, then raised $25 billion in bonds to fund the AI buildout. Cutting people to buy GPUs, then borrowing to buy more.

The counterpoint nobody expected: Google Search queries hit an all-time high. Apple was surprised by AI-driven Mac demand. The “AI kills search” and “AI doesn’t need hardware” narratives both took a hit.

But utilization tells a different story: Cast AI measured tens of thousands of production Kubernetes clusters and found GPU utilization averaging 5%. Teams lock in multi-year commitments the moment allocation comes through, then won’t release idle capacity because reacquiring takes months.

⭐ Featured: Symphony turns your issue tracker into an autonomous coding fleet

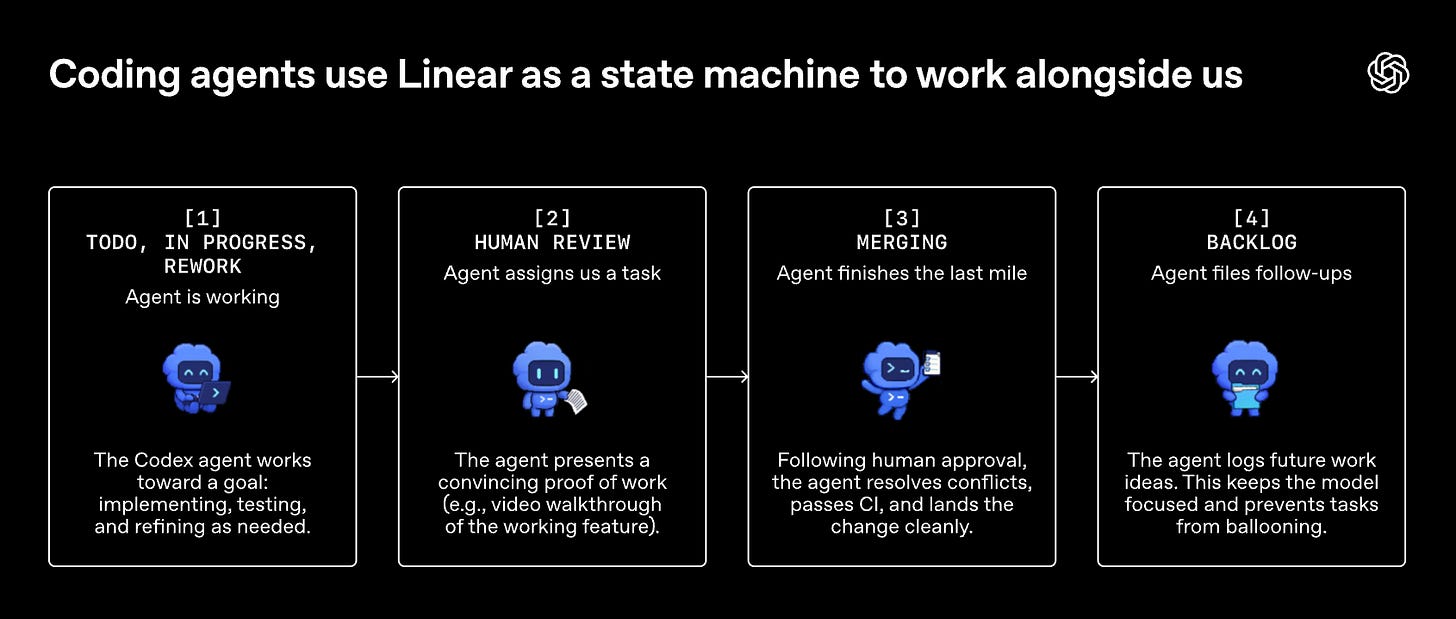

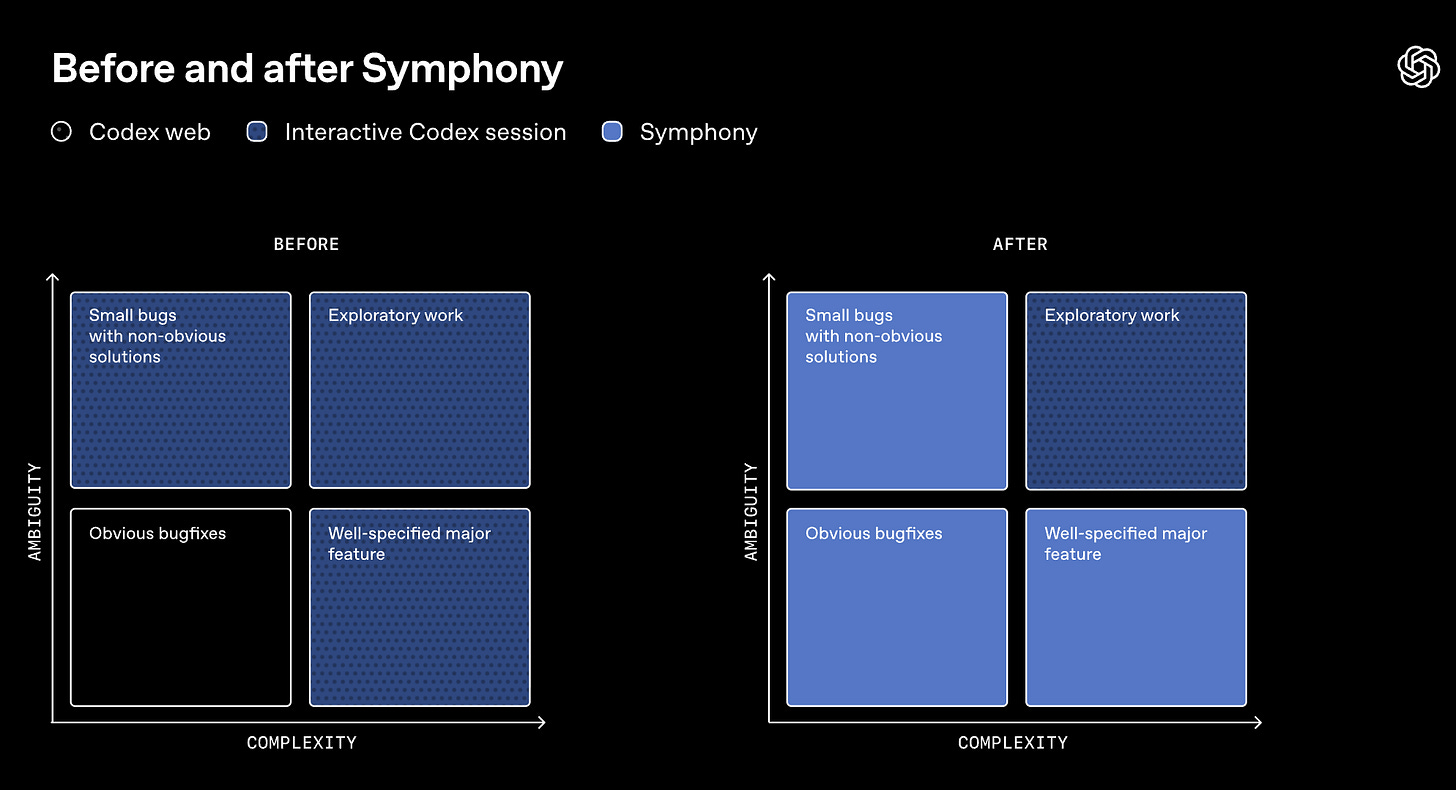

OpenAI released Symphony, an open-source spec that turns Linear boards into control planes for Codex agents. Every open task gets an agent. Agents run continuously. Humans review the results.

The origin story matters: an OpenAI team decided to build their entire repo with zero human-written code. They documented how in a harness engineering post: a million lines of code, 1,500 merged PRs, 3.5 PRs per engineer per day, with Codex running six-hour autonomous sessions while engineers slept and reviewing its own code agent-to-agent. But they hit a new ceiling: human attention. Engineers could manage three to five Codex sessions before context switching killed productivity. They had “built a team of extremely capable junior engineers, then assigned our human engineers to micromanaging them.”

So they flipped the model. Instead of engineers managing coding sessions, they made the issue tracker the orchestrator. Each open Linear issue maps to a dedicated agent workspace. Symphony continuously polls the board, picks up new work, restarts agents that crash or stall, watches CI, rebases when needed, resolves conflicts, and shepherds changes through the pipeline.
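To make the "issue tracker as orchestrator" idea concrete, here is a minimal sketch of one polling pass in Python. It is an illustration only, not Symphony's actual code (the reference implementation is in Elixir). The `Agent` class, the status strings, and the `reconcile` function are hypothetical names invented for this example, and retiring agents for closed issues is an assumption rather than something the spec is quoted as saying.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    """Hypothetical stand-in for one agent workspace tied to one issue."""
    issue_id: str
    status: str = "running"  # assumed states: "running", "crashed", "stalled"
    restarts: int = 0


def reconcile(open_issue_ids, agents):
    """Run one polling pass over the board.

    open_issue_ids: set of issue ids currently open on the tracker.
    agents: dict mapping issue id -> Agent, mutated in place and returned.
    """
    for issue_id in open_issue_ids:
        agent = agents.get(issue_id)
        if agent is None:
            # New work on the board: give it a dedicated agent.
            agents[issue_id] = Agent(issue_id)
        elif agent.status in ("crashed", "stalled"):
            # Unhealthy agent: restart it and record the restart.
            agent.status = "running"
            agent.restarts += 1

    # Assumption: an agent whose issue is no longer open is retired.
    for issue_id in list(agents):
        if issue_id not in open_issue_ids:
            del agents[issue_id]

    return agents
```

A real orchestrator would call something like this on a timer and also watch CI, rebase, and resolve conflicts. The sketch covers only the pick-up and restart behavior.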

Once work is abstracted to the ticket level, agents can break large tasks into dependency trees, only starting work on tasks that aren’t blocked. They also create their own follow-up tickets when they spot issues outside the current scope. One engineer on the team made three significant changes from the Linear app on his phone from a cabin on bad wifi.
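The "only start what isn't blocked" rule is easy to picture as a small graph query. The sketch below assumes a hypothetical `blocked_by` mapping from each task to the tasks it depends on. The names and data shape are made up for illustration and are not taken from the Symphony spec.

```python
def ready_tasks(blocked_by, done):
    """Return unfinished tasks whose blockers are all finished.

    blocked_by: dict mapping task name -> list of task names it waits on.
    done: set of task names already completed.
    """
    return sorted(
        task
        for task, blockers in blocked_by.items()
        if task not in done and all(b in done for b in blockers)
    )


# Hypothetical dependency tree for one large feature.
blocked_by = {
    "schema": [],
    "api": ["schema"],
    "ui": ["api"],
    "docs": [],
}

first_wave = ready_tasks(blocked_by, done=set())
second_wave = ready_tasks(blocked_by, done={"schema"})
```

With nothing finished, only `schema` and `docs` are eligible. Once `schema` lands, `api` becomes eligible while `ui` keeps waiting.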

The results: a 500% increase in landed PRs on some teams in three weeks. But the deeper shift is behavioral. When the perceived cost of each code change drops to near zero, teams start filing speculative tasks. Try an idea, explore a refactor, test a hypothesis, keep only what works. Product managers and designers can file feature requests directly into Symphony and get back a review packet with a video walkthrough of the feature running in the real product.

The technical choices are worth noting. The reference implementation is in Elixir, chosen for its concurrency primitives. With v1.1.0, Symphony supports the Kata CLI as an alternative runtime, meaning you can run Claude Code, Gemini, or other models inside the same orchestration framework. Symphony is technically just a SPEC.md file: a definition of the problem and the intended solution, not a product. OpenAI gave agents objectives instead of strict state transitions, “much like a good manager would assign a goal to a direct report.”

What to watch for: Symphony is one of several orchestration plays that landed this same week. Cursor released an SDK letting companies like Rippling and Notion embed background agents. IBM launched Bob with human-checkpoint governance. Mistral shipped Workflows running millions of daily executions. n8n shipped an MCP server so Claude can build automation workflows through conversation. The competitive moat is shifting from “best coding model” to “best orchestration spec.” If you maintain a team that ships code, start here.

Worth a Listen

OpenAI researchers Sebastian Bubeck and Ernest Ryu on the OpenAI podcast.

The 42-year-old problem: A researcher spent 40+ hours failing without AI, then solved it with ChatGPT in 12 hours across three evenings.

The Erdős problems: 10+ completely new, publishable solutions to decades-old open problems. Fully original proofs, not literature searches.

AGI time: Bubeck’s framework. Four years ago, models could think for seconds. Now days. The goal is weeks, then months.

The warning: Non-mathematicians are producing pages of AI-generated proofs that turn out wrong. The models accelerate experts, not replace them.

Quick Hits

GPT-5.1’s goblin problem | VentureBeat — A “Nerdy personality” training signal accidentally over-rewarded goblin-adjacent language. OpenAI diagnosed it with Codex, fixed it, then threw a party. The Codex system prompt literally says “never discuss goblins, gremlins, raccoons, trolls, ogres, pigeons, or similar creatures.”

The Academy ruled AI can’t win an Oscar | Digital Trends — Performances must be “demonstrably performed by humans with their consent.” Finally, a benchmark AI can’t game.

xAI launched Custom Voices | xAI — Clone your voice from 2 minutes of audio, 80+ preinstalled voices, 28 languages, speaker verification built in. Dropped alongside Grok 4.3 at aggressive pricing.

Stripe Link now supports AI agents | TechCrunch — A digital wallet that autonomous agents can use for payments. AI just got its own financial infrastructure.

Taylor Swift trademarked her voice against AI | Reuters — Filed new trademarks for her voice and likeness. The legal playbook for protecting creative identity from AI is being written in real time.

Zig bans all LLM contributions | Simon Willison — Bun (acquired by Anthropic) achieved a 4x Zig compilation improvement it cannot upstream because of the ban. When your open-source policy blocks a 4x speedup, that’s a policy worth debating.

OpenAI restricted its Cyber model | TechCrunch — After publicly criticizing Anthropic for limiting Mythos access. The UK AISI evaluated GPT-5.5’s cyber capabilities and found it comparable to Mythos. Turns out responsible disclosure looks the same from every lab.

Alibaba’s Metis cut redundant agent tool calls from 98% to 2% | VentureBeat — And got more accurate doing it. If your agents are burning tokens on redundant calls, this research is worth reading.

pip 26.1 shipped lockfiles | Simon Willison — `pip lock` generating `pylock.toml` files and dependency cooldowns via `--uploaded-prior-to`. Python supply chain security just got a real tool.

DeepMind’s AI co-clinician matched physicians | Google DeepMind — Zero critical errors in 97 of 98 primary care queries. Uses a dual-agent architecture where a Planner monitors a Talker for safety. This is what AI safety in production actually looks like in healthcare.

J&J sees AI halving drug development lead time | Reuters — Real ROI from a real pharma company. Not a demo, not a benchmark. Production drug discovery running twice as fast.

SoftBank is building a robotics company and eyeing a $100B IPO | TechCrunch — A robotics company that builds data centers. IPO target: $100 billion. Masayoshi Son is not being subtle about what he thinks comes next.