Another Weekly AI Newsletter: Issue 72

Anthropic ships into five verticals and gives every plan an SDK budget. OpenAI launches a deployment company with 150 engineers. Cisco, GitLab, and GM cut thousands at record revenue. Grok Build at $299/mo.

Anthropic shipped into legal, small business, healthcare, and AWS in one week.

Claude for the legal industry launched with 12 practice-area plugins. Contract review, M&A diligence, and regulatory compliance out of the box. 87% of general counsel now use generative AI, up from 44% the prior year.

Claude for Small Business connected to QuickBooks, PayPal, and HubSpot. 15 ready-to-run workflows cover invoicing, CRM, and document signing, with DocuSign and Canva among the connected tools.

Anthropic committed $200M to the Gates Foundation. Grants, Claude credits, and technical support for vaccine screening, disease forecasting, K-12 education, and agricultural tools.

Claude Platform went GA on AWS. First cloud provider to offer Anthropic’s native platform with unified billing and same-day feature parity with the native API.

Every subscriber now gets separate Agent SDK credits. Pro gets $20/month, Max gets up to $200. Unlike OpenAI, which bundles Codex and third-party usage into normal plan limits, Anthropic is subsidizing the developer ecosystem with a separate bucket.

Claude Code limits increased another 50% through July 13, on top of the doubling from the week before.

Ramp and Axios independently confirmed Anthropic overtook OpenAI in workplace adoption. Though VentureBeat identified three structural threats to that lead.

The thread: Anthropic is trying to become the default for every vertical at once. Legal, healthcare, small business, enterprise, developer tooling. Whether that’s a platform strategy or overextension depends on execution.

OpenAI launched a deployment company and put Codex on your phone.

The OpenAI Deployment Company launched with 150 engineers on day one. It brings in 19 investment firms and consultancies, is majority-owned by OpenAI, and acquired Tomoro to provide Forward Deployed Engineers. Valued at $14B.

ChatGPT connected to bank accounts. Plaid integration for Pro users in the US, with an Intuit partnership for actionable financial steps.

Codex shipped to iOS and Android. Mobile preview lets users start, review, and approve coding tasks while agents run on a separate device.

OpenAI disclosed a supply chain compromise. A TanStack npm package attack exposed code-signing certificates for macOS, Windows, iOS, and Android apps. Full certificate rotation required.

The thread: Both OpenAI and Anthropic launched enterprise services arms within a week of each other. The model API is becoming a commodity. The margin is shifting to who can get it deployed inside your organization first.

Companies are cutting workers at record revenue to fund AI.

Cisco cut 4,000 jobs while reporting record quarterly revenue. Stock rose 15% on surging AI orders.

GitLab announced sweeping restructuring to fund agent development. Cut headcount, flattened management, reorganized R&D into 60 smaller teams, and retired its CREDIT values framework.

GM laid off hundreds of IT workers and began hiring their replacements, explicitly seeking stronger AI skills.

Samsung faces a looming strike over AI. Global AI boom driving deep internal divisions between management and workers.

The thread: Revenue is up at Cisco, GitLab, and GM alike. The functions going are IT operations, developer tooling management, and corporate overhead that was previously considered secure.

Grok Build, Claude Code, and Cursor all shipped agentic upgrades. LangChain shipped nine products to support them.

xAI launched Grok Build in beta. Terminal-native CLI with up to 8 parallel agents, Grok 4.3 beta, 2M token context. Priced at $299/month (introductory $99). SuperGrok Heavy only.

Claude Code limits increased 50%. Through July 13, on top of the doubling from the prior week. Plus separate Agent SDK credits.

Cursor shipped /orchestrate. Planner/worker/verifier loops that re-spawn on failure. Parallel subagents. Always-on CI agents.
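For readers who want to picture a planner/worker/verifier loop that re-spawns on failure, here is a minimal sketch. It is not Cursor's implementation: `orchestrate`, the toy planner, worker, and verifier, and the `max_attempts` budget are all hypothetical names made up for illustration, and the tasks run one after another rather than as parallel subagents.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Result:
    task: str
    output: str
    attempts: int


def orchestrate(
    goal: str,
    plan: Callable[[str], List[str]],
    work: Callable[[str, int], str],
    verify: Callable[[str, str], bool],
    max_attempts: int = 3,
) -> List[Result]:
    """Split a goal into tasks, then retry each task until it verifies."""
    results = []
    for task in plan(goal):
        for attempt in range(1, max_attempts + 1):
            output = work(task, attempt)   # "spawn" a fresh worker for this attempt
            if verify(task, output):       # the verifier gates every result
                results.append(Result(task, output, attempt))
                break
        else:
            # No attempt passed verification within the retry budget.
            raise RuntimeError(f"task failed after {max_attempts} attempts: {task}")
    return results


# Toy stand-ins so the sketch runs without any model or API (all hypothetical).
def toy_plan(goal: str) -> List[str]:
    return [f"{goal}: step {i}" for i in (1, 2)]


def toy_work(task: str, attempt: int) -> str:
    # Pretend the first attempt at step 2 produces a bad result.
    if task.endswith("step 2") and attempt == 1:
        return "broken"
    return "ok"


def toy_verify(task: str, output: str) -> bool:
    return output == "ok"


results = orchestrate("fix flaky test", toy_plan, toy_work, toy_verify)
for r in results:
    print(r.task, "->", r.output, f"(attempts: {r.attempts})")
```

In this toy run the second task fails verification once and passes on the re-spawned attempt. A real system would presumably also feed the verifier's feedback back into the next worker, which the sketch leaves out.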

LangChain shipped nine products at Interrupt 2026. Among them: SmithDB for agent traces, LLM Gateway for centralized control, Sandboxes GA for isolated testing, Deep Agents 0.6 for long-running workflows, and the Agent Development Lifecycle framework.

The thread: Grok Build at $299/month, Claude Code with separate SDK credits, Cursor as a standalone IDE. Three very different bets on how developers will pay for agentic coding. LangChain is betting the real money is in the infrastructure underneath all of them.

⭐ Featured: Thinking Machines built an AI that listens while it talks.

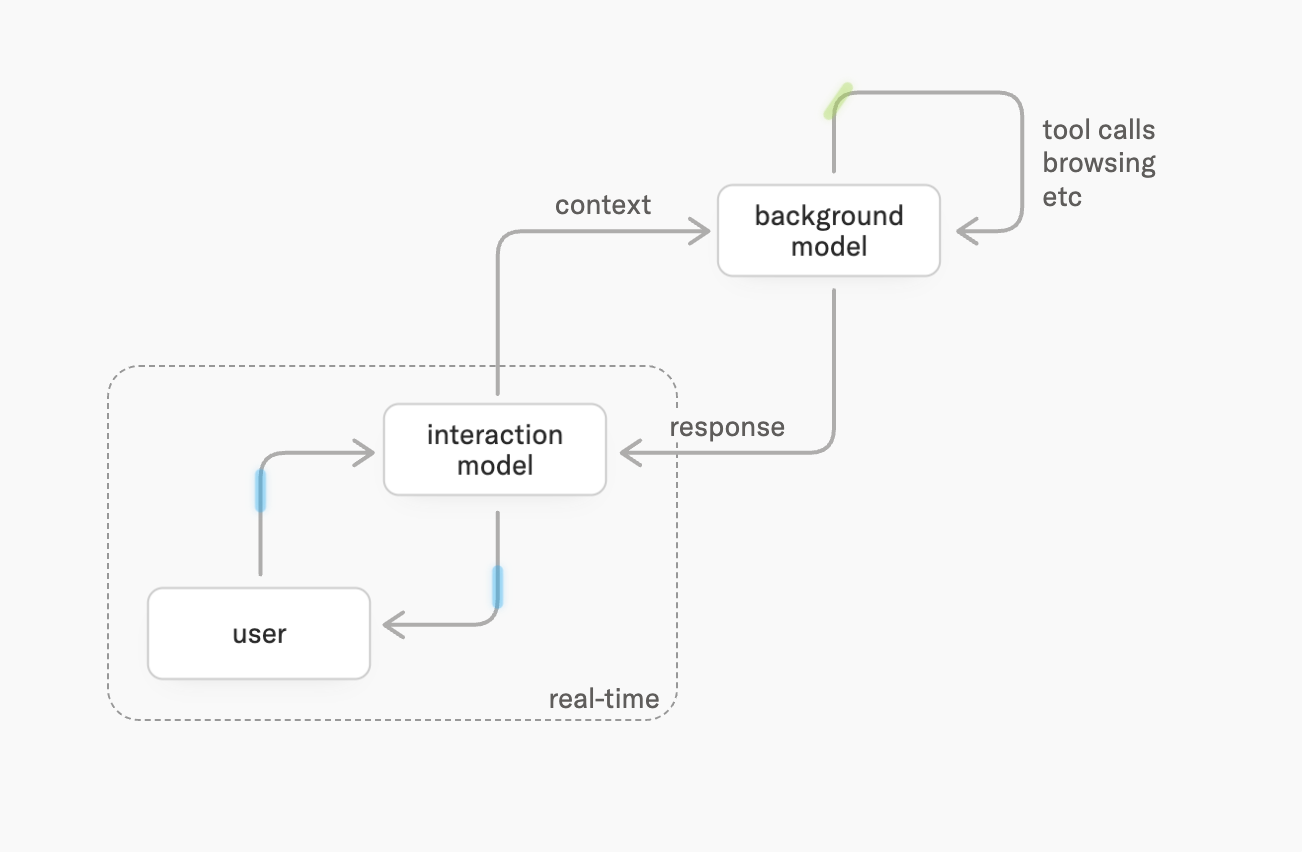

Every AI conversation today works the same way: you talk, the model waits, the model responds. Thinking Machines published research on “interaction models” that throw out that assumption entirely.

Their model processes continuous 200ms micro-turns of audio, video, and text simultaneously. There are no turn boundaries. The model listens while speaking, interrupts when it sees something wrong in your code, reacts to visual cues without being prompted, and runs background reasoning while maintaining the conversation.

The architecture splits into two parts: an interaction model that maintains real-time presence (always perceiving, always ready to respond), and a background model that handles deeper reasoning and tool use asynchronously. When the background model finishes a task, the interaction model weaves results into the conversation at an appropriate moment instead of interrupting.
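The two-part split is easier to see in a toy event loop. The sketch below is not Thinking Machines' code: it only assumes the shape described above, with a fast loop that steps once per 200ms micro-turn and a slower background task whose result is picked up at the next micro-turn instead of cutting in. The function names and the 0.5 second background delay are invented for illustration.

```python
import asyncio
from typing import List

MICRO_TURN_SECONDS = 0.2  # the 200ms micro-turn from the article


async def background_reasoner(question: str, done: asyncio.Queue, delay: float) -> None:
    """Stand-in for the slower background model (deep reasoning, tool use)."""
    await asyncio.sleep(delay)  # pretend the work spans several micro-turns
    await done.put(f"answer to {question!r}")


async def interaction_loop(
    turns: int,
    tick: float = MICRO_TURN_SECONDS,
    background_delay: float = 0.5,  # hypothetical duration of the background job
) -> List[str]:
    """Stand-in for the interaction model: one step per micro-turn, never blocked."""
    done: asyncio.Queue = asyncio.Queue()
    log: List[str] = []
    task = asyncio.create_task(
        background_reasoner("why is the build red?", done, background_delay)
    )
    for turn in range(turns):
        await asyncio.sleep(tick)  # perceive one micro-turn of input
        if not done.empty():
            # The result is ready: weave it in at this turn boundary.
            log.append(f"turn {turn}: weave in {done.get_nowait()}")
        else:
            log.append(f"turn {turn}: keep listening and talking")
    await task
    return log


if __name__ == "__main__":
    for line in asyncio.run(interaction_loop(turns=5)):
        print(line)
```

The point of the design is that the foreground loop never awaits the background task directly. It only checks a queue once per micro-turn, so a slow tool call cannot stall the conversation.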

The benchmarks are striking. On FD-bench (the standard interaction quality benchmark), their model scored 77.8 versus 46.8 for GPT-Realtime-2. On responsiveness, they hit 0.40-second turn-taking latency versus 1.18 seconds for GPT-Realtime-2. They also created three new benchmarks (TimeSpeak, CueSpeak, visual proactivity) that no existing model can meaningfully perform. GPT-Realtime-2 scores near zero on all of them.

The model is a 276B parameter MoE with 12B active. It uses encoder-free early fusion, meaning no separate Whisper or TTS models. Audio comes in as raw dMel signals, video as 40x40 patches. Everything is co-trained from scratch.
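A quick back-of-envelope on the figures quoted in this section, using only numbers from the article. Reading "12B active" as parameters used per token is an assumption here, though it is the usual meaning for mixture-of-experts models.

```python
total_params_b = 276      # total parameters, in billions (from the article)
active_params_b = 12      # active parameters, in billions (assumed to mean per token)
micro_turn_ms = 200       # micro-turn length in milliseconds
latency_theirs_s = 0.40   # turn-taking latency, Thinking Machines
latency_gpt_s = 1.18      # turn-taking latency, GPT-Realtime-2

active_fraction = active_params_b / total_params_b
micro_turns_per_second = 1000 / micro_turn_ms
latency_ratio = latency_gpt_s / latency_theirs_s

print(f"share of weights active: {active_fraction:.1%}")
print(f"micro-turns per second: {micro_turns_per_second:.0f}")
print(f"latency advantage: {latency_ratio:.2f}x")
```

So roughly one weight in 23 is active at a time, the model takes five perception steps per second, and the reported turn-taking latency is a little under a third of GPT-Realtime-2's.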

Their argument comes from Rich Sutton’s “bitter lesson”: if interactivity is bolted on through harnesses (voice activity detection, turn-taking logic), it can never scale with intelligence. If it’s native to the model, scaling makes the model both smarter and a better collaborator.

What to watch for: This is a research preview from a startup (276B parameters, limited availability). But the design principle matters: current real-time systems from OpenAI and Google use harnesses to fake interactivity on top of turn-based models. Thinking Machines is arguing that’s a dead end. If they’re right, every voice agent shipping today is architecturally temporary.

🎙️ Worth a Listen

IBM AI Engineer Bri Kopecki on why agents without infrastructure are “brilliant goldfish.”

The problem: Most AI agents have no memory, no access control, no audit trail. Every conversation starts from scratch.

The six-layer stack: Scheduler (who goes first), memory manager (short/long/episodic), tool manager (sandboxed execution), identity manager (tokens and permissions), observability (full decision tracing), and guardrails/governance (human-in-the-loop for high-stakes decisions).

Why it matters now: This maps directly to what LangChain shipped this week (SmithDB for traces, LLM Gateway for access control, Sandboxes for tool isolation) and explains why Cursor, Anthropic, and OpenAI are all building orchestration layers.
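To make the six layers concrete, here is a toy pipeline in which one request passes through each of them in turn. This is a sketch under loose assumptions, not IBM's or LangChain's design: `AgentStack`, `AgentRequest`, the FIFO scheduler, and the permission table are all hypothetical, and memory is reduced to a per-agent list of past tool calls.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class AgentRequest:
    agent: str
    tool: str
    high_stakes: bool = False
    trace: List[str] = field(default_factory=list)  # observability record


class AgentStack:
    """Toy version of the six layers; every name here is illustrative."""

    def __init__(self, permissions: Dict[str, Set[str]]):
        self.permissions = permissions          # identity manager: agent -> allowed tools
        self.memory: Dict[str, List[str]] = {}  # memory manager: past calls per agent
        self.queue: List[AgentRequest] = []     # scheduler: simple FIFO queue

    def submit(self, request: AgentRequest) -> None:
        self.queue.append(request)

    def run_next(self, human_approves: bool = False) -> str:
        req = self.queue.pop(0)                              # scheduler: who goes first
        req.trace.append("scheduled")
        history = self.memory.setdefault(req.agent, [])      # memory manager
        req.trace.append(f"memory: {len(history)} prior calls")
        if req.tool not in self.permissions.get(req.agent, set()):
            req.trace.append("denied")                       # identity manager
            return "denied"
        if req.high_stakes and not human_approves:
            req.trace.append("held for human review")        # guardrails/governance
            return "held"
        history.append(req.tool)                             # tool manager stand-in
        req.trace.append(f"ran {req.tool}")
        return "ok"


stack = AgentStack({"billing-bot": {"read_invoice", "issue_refund"}})
first = AgentRequest("billing-bot", "read_invoice")
second = AgentRequest("billing-bot", "issue_refund", high_stakes=True)
stack.submit(first)
stack.submit(second)
print(stack.run_next(), first.trace)
print(stack.run_next(), second.trace)
```

The first request runs, and the second is held because it is flagged high-stakes and no human has approved it. In both cases the trace records every decision, which is the observability layer doing its job. Without the memory dictionary, the second call would see zero prior calls: the "brilliant goldfish" problem in miniature.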

Quick Hits

Cerebras IPO’d at $5.55B, shares jumped 89% on day one | TechCrunch — Near $100B market cap on debut. The AI chip premium is real.

Medicare created a payment model built for AI-assisted services | TechCrunch — The largest US payer quietly opened the door for clinical AI reimbursement. This will pull deployment faster than any product launch.

Musk v. Altman trial went to the jury | MIT Tech Review — Closing arguments accused Musk of selective amnesia and Altman of lying about the nonprofit mission.

ArXiv banned researchers for AI-generated papers | The Verge — Academic publishing’s authentication problem now has teeth, but detection is still losing the arms race.

Meta embedded AI in Threads and won’t let users block it | The Verge — Captive distribution at 3B+ users, no opt-out.

OpenAI Parameter Golf results: 1,000+ participants, agents everywhere | OpenAI — An ML challenge where the vast majority of submitters used coding agents. OpenAI built a Codex-based triage bot to handle the submission volume.

Claude Mythos cracked Apple’s M5 memory security in five days | Tom’s Hardware — First privilege escalation exploit on M5. Apple spent half a decade building Memory Integrity Enforcement. Standard user to root access.

Nvidia committed $40B in equity AI investments in 2026 | TechCrunch — Not just selling chips. Acquiring stakes in the companies that consume the most of them.

Anthropic published “2028: Two scenarios for global AI leadership” | Anthropic — A policy paper on US-China AI competition. Anthropic is writing geopolitics now.

YouTube expanding AI deepfake detection to all adult users | The Verge — The detection side is scaling up.

Google updated spam rules to include AI manipulation attempts | The Verge — SEO for the age of AI-generated content.