Another Weekly AI Newsletter: Issue 61

Anthropic gets blacklisted, OpenAI makes four announcements in five days, agent observability emerges as a real concern, 🍌 2, healthcare AI shows ROI, and Geoffrey Hinton sits down with Neil deGrasse Tyson

Personal Note

This newsletter comes to you late this week, on a Tuesday morning. Like many others, I was caught in the Anthropic outage, and I depend on this technology to drive the initiatives that are meaningful to me. When I woke up to finalize the newsletter and found things offline, I journaled while listening to the birds outside, played some music, reflected on my weekend, and enjoyed refreshing activities I normally don’t find the time for. It was a lesson for me to find more time to step away from the keyboard.

The Week’s Thesis

AI went political this week: Anthropic’s relationship with the Department of War fell apart, and hours later, OpenAI signed a deal for classified network deployment. On paper, both companies claim the same red lines. But the sequence alone was enough to make people uneasy. More on this in our featured story below.

OpenAI’s partnership blitz: They launched Frontier Alliances, a new partner program, followed by a Codex integration with Figma bridging code and design workflows. By Friday, they announced a strategic partnership with Amazon and released a joint statement with Microsoft reaffirming their existing relationship. Four announcements in five days, all while the Department of War deal was making headlines.

Agent observability is becoming a thing: Microsoft found that 80% of Fortune 500 companies are running active agents but most lack visibility into what those agents are doing. LangChain argued that traditional APM tools weren’t built for this, New Relic shipped an agent-specific observability platform, and Google published a production-readiness guide. Observability is quietly becoming part of the conversation, and it’s worth paying attention to.

Healthcare AI is moving: NVIDIA’s annual survey found that 70% of healthcare organizations are now actively deploying AI, with 85% reporting increased revenue. Eli Lilly went live with LillyPod, the most powerful AI factory wholly owned by a pharmaceutical company, purpose-built for drug discovery. Oura shipped a proprietary AI model focused on women’s reproductive health, hosted entirely on their own infrastructure. And NIST published guidance on AI trustworthiness standards for clinical settings. From drug discovery to consumer wearables to regulation, healthcare AI is moving.

Quick Hits

Jira’s latest update allows AI agents and humans to work side by side | TechCrunch — Agents on the same sprint board as humans with deadlines and assignments. This is mainstream adoption.

Pro-level image generation gets faster and more accessible with Nano Banana 2 | Google Cloud AI — Google’s enterprise image gen model gets faster and cheaper. The gap between “good enough” and “production-ready” keeps shrinking.

Anthropic acquires Vercept to advance Claude’s computer use capabilities | Anthropic — Anthropic is doubling down on computer use. If agents are going to operate in production, they need to see and interact with real interfaces.

Detecting and preventing distillation attacks | Anthropic — Anthropic identified industrial-scale distillation campaigns by DeepSeek, Moonshot, and MiniMax, totaling over 16 million exchanges across 24,000 fraudulent accounts designed to extract Claude’s capabilities. They published their approach to catching and preventing it.

The human work behind humanoid robots is being hidden | MIT Technology Review — The humans still doing the work that robot demos suggest is automated. A good reality check.

Featured Story: Anthropic’s Deal With the Department of War Fell Through. Hours Later, OpenAI Signed One.

Anthropic published its Responsible Scaling Policy v3.0 on February 24, a ground-up rewrite of the framework it uses to decide what it will and won’t build. Two days later, Dario Amodei published a statement revealing that Anthropic had been deeply embedded in the Department of War for months: intelligence analysis, cyber operations, modeling and simulation. The company also disclosed that it had walked away from several hundred million dollars in revenue by cutting off entities linked to the Chinese Communist Party. But Anthropic drew two red lines: no mass domestic surveillance of Americans, and no fully autonomous weapons.

On February 27, Secretary of War Pete Hegseth designated Anthropic a “supply chain risk,” a label historically reserved for US adversaries. Trump ordered every federal agency to stop using Anthropic technology. That same night, OpenAI announced a deal to deploy its models on the Department of War’s classified network.

Here’s where it gets interesting: OpenAI’s stated terms include the same two red lines. No mass surveillance. No autonomous weapons. But OpenAI walked away with a deal and Anthropic walked away blacklisted. OpenAI’s approach centers on what Altman called a “safety stack”: cloud-only deployment that keeps OpenAI’s safety layers active, cleared personnel in the loop, and an agreement that if the model refuses a task, the government won’t force a workaround. What exactly differed in the negotiations isn’t public, but the outcome speaks for itself.

The RSP v3.0 explains the philosophical scaffolding behind Anthropic’s position. After two and a half years of trying to implement capability-based safety thresholds, Anthropic concluded that “the science of model evaluation isn’t well-developed enough to provide dispositive answers.” The policy now splits commitments into what Anthropic will enforce unilaterally and what requires industry-wide coordination. Autonomous weapons fall squarely in the second bucket: the reliability isn’t there yet, and no single company can build the guardrails alone.

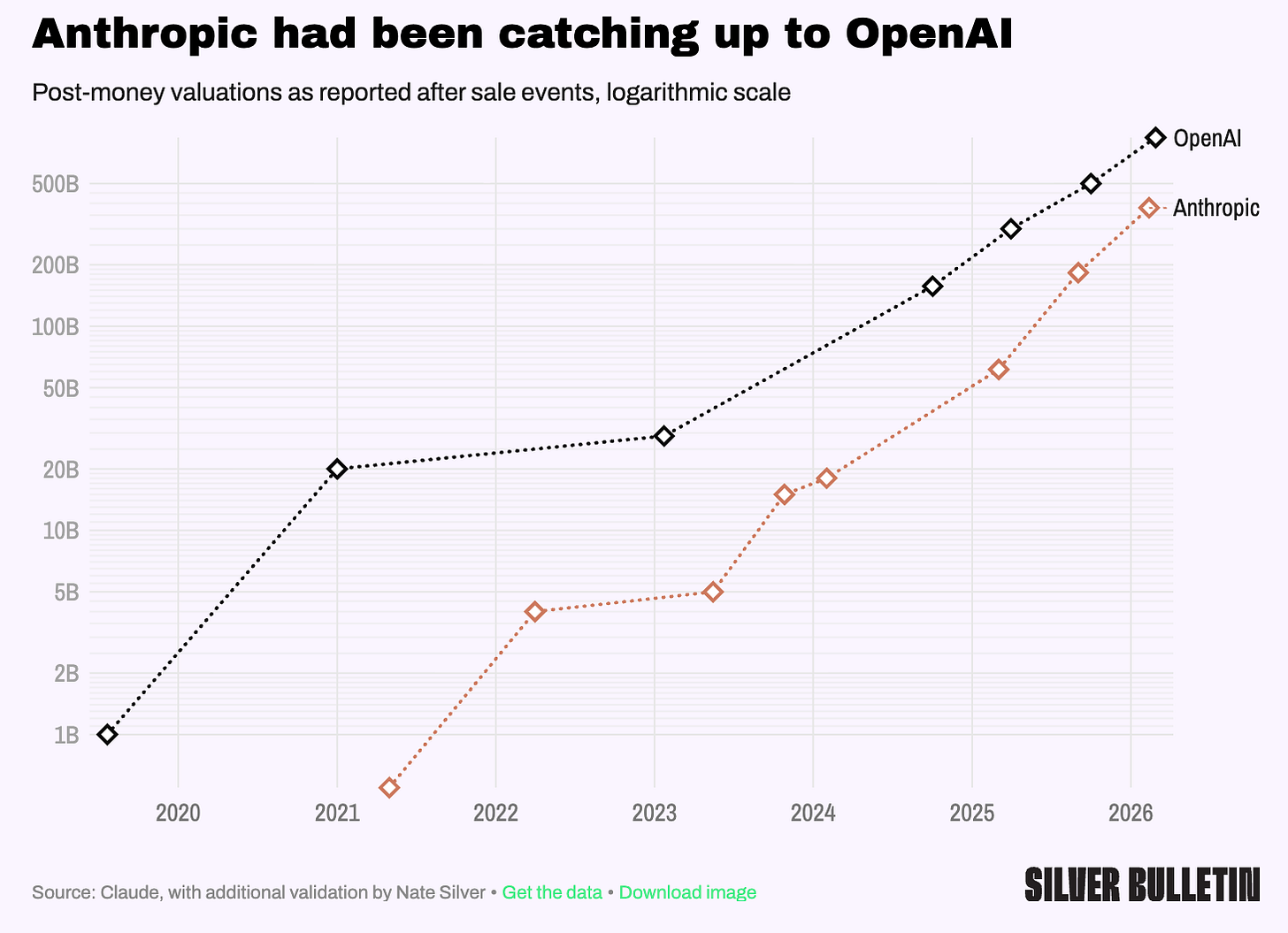

The business implications are already visible. Nate Silver noted that Anthropic had been steadily closing the valuation gap with OpenAI. Whether the Department of War designation slows that trajectory is an open question.

The question practitioners should be sitting with isn’t “who’s right.” It’s what happens next. If you’re building on Claude for sensitive workloads, your platform just got blacklisted from every federal system. If you’re building on OpenAI, your platform’s safety guarantees rest on a technical architecture rather than a legal commitment. Both carry risk. The difference is in which failure mode you’re betting on.

What to watch for: Whether the “supply chain risk” designation survives legal challenge, and whether OpenAI’s cloud-only safety stack holds as models get more capable and the Department of War pushes for edge deployment.

Watch This

StarTalk: Geoffrey Hinton on AI, Consciousness, and the Future: Neil deGrasse Tyson sits down with Nobel laureate Geoffrey Hinton to cover the full arc: how neural nets work, why backpropagation was the breakthrough, whether AI can actually reason, and the heavy questions around consciousness, energy demands, and what happens when models start generating their own training data.

Also This Week

Intrinsic joins Google | TechCrunch

Let Gemini handle your multi-step daily tasks on Android | Google AI Blog

Anthropic Education Report: The AI Fluency Index | Anthropic Research

The persona selection model | Anthropic Research

Disrupting malicious uses of AI | OpenAI

Can Local AI Stand In for the Cloud? | deeplearning.ai

AI is rewiring how the world’s best Go players think | MIT Technology Review

What I’m Watching

OpenAI’s new role in government AI. How does OpenAI’s solidified position with the Department of War shift the tide of AI in government? Will it be relatively quiet, or will we see noticeable shifts in how these technologies are deployed domestically and how we engage in combat with other countries? And if growth and innovation eventually push against the boundaries of the agreement, does the government override it, or does OpenAI become more malleable?

The enterprise agent framework race. We are still in the “release agents as a capability” phase. Most enterprise platforms are now shipping their own proprietary frameworks. Will those be expansive enough to meet the breadth of platform use cases, or will we see demand expand beyond what a single-platform framework can handle, requiring true enterprise solutions?

Agent observability, from experience. Observability is something we are hyper-focused on at Ping. We find that we have the most control with our custom agents, and that control drops significantly when we adopt out-of-the-box frameworks that leave us with little say over design practices. If that’s true at our scale, it’s worth asking what it looks like at enterprise scale.