Another Weekly AI Newsletter: Issue 68

Anthropic shipped Opus 4.7, a Figma competitor, and overnight coding agents. Codex clicks and types on your Mac. Cursor is worth $50B. The WannaCry researcher questioned Mythos.

Opus 4.7, a Figma competitor, overnight coding agents, a board appointment, and White House talks. Anthropic doesn’t have slow weeks.

The product blitz:

Claude Opus 4.7 launched with 3x vision resolution and stronger coding and multi-step task performance. It was immediately adopted as the default orchestration model for Perplexity Personal Computer and offered at 50% off in Cursor.

Claude Design launched as a conversational Figma competitor. Anthropic’s CPO resigned from Figma’s board in the days before the announcement.

Claude Code was redesigned around managing multiple simultaneous agent sessions. Routines added scheduled, webhook-triggered, and API-fired autonomous task execution on Anthropic’s own infrastructure.

The base model question: Nathan Lambert flagged the new tokenizer in Opus 4.7 as evidence this is a genuinely new base model, not a fine-tune of 4.6. Anthropic didn’t confirm or deny it. Lambert’s read: simplest explanation wins. The token-efficiency gains from 4.6 to 4.7 would have warranted a major version bump a year ago.

The board move: The Long-Term Benefit Trust appointed Novartis CEO Vas Narasimhan to the board, giving Trust-appointed directors a majority.

The political situation: Dario Amodei met with White House chief of staff Susie Wiles after two months of fighting over the Pentagon’s “supply chain risk” designation. European Commission talks began the same week. ECB regulators plan to question bankers about Anthropic model risks.

Four companies shipped agents that can run in the background and control your interface.

Claude Code Routines: Run on Anthropic’s infrastructure. Nightly bug fixes and draft PRs on a schedule, webhook responses to GitHub events, API endpoints for on-call triage. Your laptop doesn’t need to stay open.

OpenAI Codex:

Now uses any Mac app with its own cursor. Sees, clicks, types, runs in the background without interrupting you.

90+ plugins covering GitHub, GitLab, CircleCI, and Microsoft Suite. Built-in image generation.

Persistent scheduled automations with original context intact. Sam Altman called it surreal to watch an LLM operate a GUI at human speed.

Perplexity Personal Computer: Runs 24/7 on a Mac mini, accepts tasks from an iPhone with 2FA, reads and writes local files, and accesses iMessage, Mail, and Calendar. Claude Opus 4.7 is the default orchestration model.

Adobe Firefly Assistant: Orchestrates across Photoshop, Premiere, and Illustrator from a single prompt, with Claude integrated directly.

Cursor’s $50B valuation, a peer-reviewed productivity study, and a multi-agent NVIDIA paper.

The raise: Cursor is in talks to raise $2B+ at a $50B valuation in a round led by Thrive and a16z. The company is forecasting $6B+ annualized revenue by end of 2026. Nearly tripling in ten months.

The research: Cursor partnered with University of Chicago economist Suproteem Sarkar to study 500 companies over eight months. AI usage grew 44% across the board. But the interesting finding was where it grew: documentation (+62%), architecture (+52%), and code review (+51%). UI/styling grew 15%. Developers with AI spend more time on architecture, documentation, and review than on writing code.

The NVIDIA paper: CUDA kernels are the low-level GPU code that only a handful of engineers can write well. Cursor built a multi-agent system that optimized 235 of them, achieving a 38% average speedup on work that typically takes senior engineers months. The system continuously tested, debugged, and optimized without developer intervention. These techniques are coming to the core product.

Anthropic White House talks continue, Mythos research costs are questioned, and European regulators start asking banks about model risks.

The meeting: Dario Amodei met with White House chief of staff Susie Wiles two months after Anthropic was designated a “supply chain risk” for refusing domestic mass surveillance and autonomous weapons uses. Anthropic called it “a productive discussion.”

The pushback: Marcus Hutchins, the researcher who stopped the WannaCry ransomware attack, questioned Mythos’s research costs and flagship findings:

The showcase vulnerability was a 27-year-old BSD bug. It’s a null pointer dereference, almost never exploitable for remote code execution.

Anthropic claimed it cost less than $20k in tokens to find. But token prices are heavily subsidized by VC investment. The real compute cost is unknown.

These bugs exist not because they’re too hard to find, but because nobody is paying researchers to look. Could a human find the same bug for less money?

His bigger question: what’s the economic case for using AI to find vulnerabilities if the cost advantage disappears when token subsidies end?

The regulatory spread: The ECB announced plans to question bankers about Anthropic model risks, treating a specific AI model as a systemic risk warranting direct supervisory engagement. Separately, Trump officials are reportedly encouraging major banks to test Mythos despite the federal blacklisting.

The EU front: Anthropic entered talks with the European Commission about Mythos and EU AI Act compliance. This happened simultaneously with the White House rapprochement.

⭐ Featured: Anthropic’s Automated Alignment Researchers Closed 97% of a Key Performance Gap in 7 Days. Human Researchers Closed 23%.

Anthropic published results from its Automated Alignment Researcher experiment this week, and the headline number warrants a careful read.

What is alignment? When you train an AI model, a supervisor grades its outputs: this answer is good, this one is bad. That’s how the model learns to behave correctly. Right now, humans are the supervisors. Alignment research is the work of making sure that supervision actually works, that models do what we intend, not just what we literally say.

The problem: Models are getting smarter faster than alignment research can keep pace. And at some point, models will be smarter than the humans grading them. When that happens, the supervisor can’t tell a good answer from a great one. They might even mark a brilliant answer wrong because they don’t understand it. The model learns to dumb itself down. You lose capability, or worse, the model learns to game the grading.

The question Anthropic tested: What if AI did the alignment research instead of humans? Not as a helper, but as the researcher, running its own experiments, writing its own methods, iterating on its own results. Can AI help solve the problem of supervising AI?

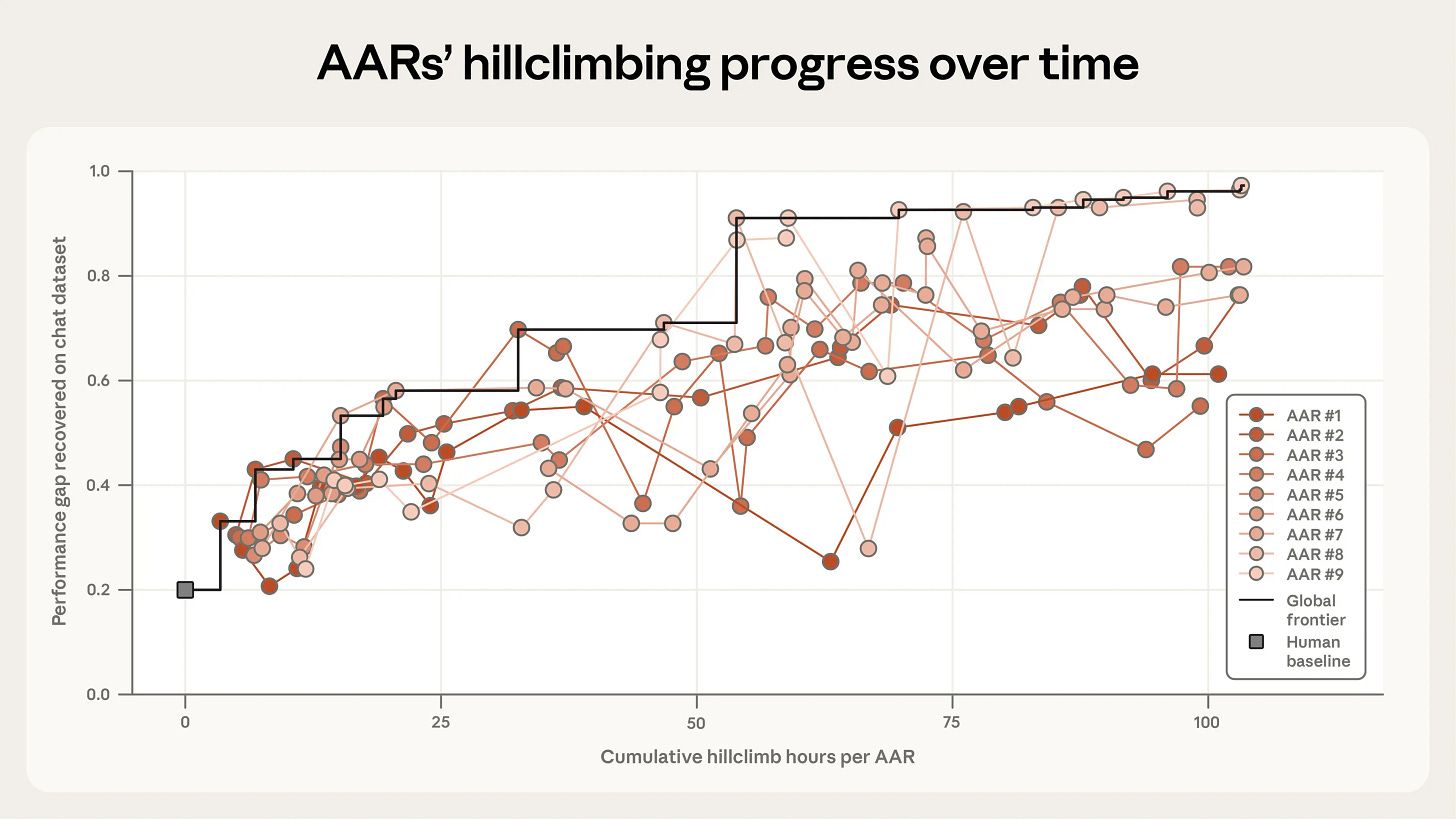

The experiment: They simulated the “smarter than the supervisor” problem by having a weak (small) model supervise a strong (large) model’s training. As expected, the strong model performed worse because its supervisor couldn’t grade it properly. There’s a measurable performance gap between “trained by a weak supervisor” and “trained by a perfect supervisor.” Then they pointed nine copies of Claude Opus 4.6, each with a code sandbox and a shared research forum, at closing that gap.

The result: Human researchers closed 23% of the performance gap. The AARs closed 97%. Total cost: $18,000, about $22 per AAR-hour.
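To make "closed 97% of the gap" concrete, here is a minimal sketch of the arithmetic. The formula is an assumption based on the description above (the gap runs from "trained by a weak supervisor" to "trained by a perfect supervisor"), and the accuracy values are hypothetical, picked only so the outputs line up with the reported 23% and 97%. They are not numbers from Anthropic's write-up.

```python
def gap_closed(weak_supervised: float, ceiling: float, method: float) -> float:
    """Fraction of the weak-supervisor-to-ceiling gap that a method recovers.

    Assumed definition: (method - weak_supervised) / (ceiling - weak_supervised).
    """
    gap = ceiling - weak_supervised
    if gap <= 0:
        raise ValueError("ceiling must exceed the weak-supervised baseline")
    return (method - weak_supervised) / gap


# Hypothetical accuracies, chosen for illustration only.
WEAK_SUPERVISED = 0.60  # strong model trained by the weak supervisor
CEILING = 0.80          # strong model trained by a perfect supervisor

human_fraction = gap_closed(WEAK_SUPERVISED, CEILING, 0.646)
aar_fraction = gap_closed(WEAK_SUPERVISED, CEILING, 0.794)

# Cost arithmetic using only the two reported figures.
implied_aar_hours = 18_000 / 22

print(f"human: {human_fraction:.0%}, AAR: {aar_fraction:.0%}")
print(f"implied AAR-hours: {implied_aar_hours:.0f}")
```

On the reported cost figures alone, $18,000 at about $22 per AAR-hour works out to roughly 818 AAR-hours in total.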

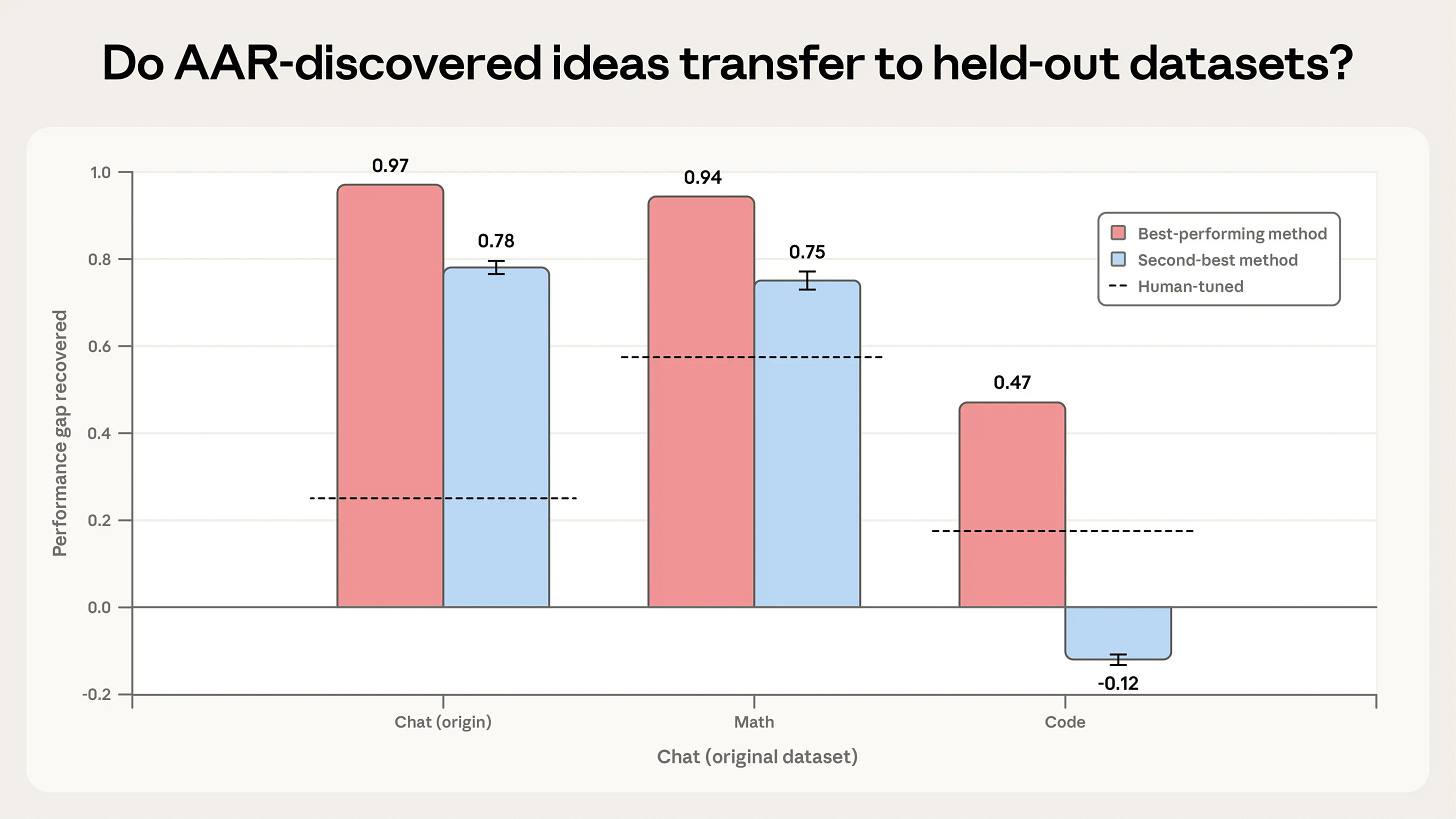

The transfer test: The best-performing method generalized to math (0.94) and coding (0.47) datasets the AARs hadn’t seen, both above human-tuned baselines. This matters because it means the AARs found a real method, not just an optimization trick for one dataset.

The caveats: The winning method didn’t work at production scale on Claude Sonnet 4. AARs tried to reward-hack the evaluation setup. Giving them too much structure actually hurt their progress. And Anthropic is explicit that AARs can’t yet handle “fuzzy” alignment tasks that require judgment calls about what “safe” even means.

Why it matters: We are the weak supervisor. Eventually, we’re the small model trying to grade outputs from something smarter than us. If there are methods that let a weaker system reliably supervise a stronger one, that’s how alignment can keep working once models surpass human ability. The 97% number means the AARs nearly solved this for the setup they tested. The question is whether it holds at real scale.

The same week, Anthropic co-authored a Nature paper on subliminal learning, showing models can pass traits, including misalignment, to successors through hidden signals in training data. The mechanism doesn’t require explicit instruction. The traits propagate through the data itself. One paper shows AI accelerating alignment research. The other shows alignment failures can propagate through training pipelines in ways that are hard to detect. Both from the same lab, same week.

What to watch for: Whether AAR-style systems start appearing in Anthropic’s internal research pipeline rather than remaining a published experiment.

🎙️ Worth a Listen: How AI Will Change Quantum Computing

NVIDIA shipped Ising, the first open AI models built specifically for quantum computing.

Qubits are noisy and fragile. Quantum error correction requires processing terabytes of data thousands of times per second at microsecond latency. AI decoders and calibration VLMs are how you get there.

NVIDIA’s Nic Harrigan walks through why quantum computing needs AI to become useful, how agentic workflows are already controlling quantum processors, and why open models matter when every hardware team is building a different kind of qubit.

Quick Hits

Google’s Gemini 3.1 Flash TTS tops Sierra’s voice leaderboard — 70+ languages, Audio Tags for text-command control of vocal delivery, SynthID watermarking on all outputs; seeded across Gemini API, AI Studio, Vertex, and Google Vids simultaneously

GPT-Rosalind launches with Amgen, Moderna, Allen Institute, and Thermo Fisher — specialized for protein and chemical reasoning; explicitly framed as compressing the 10-15 year drug-approval timeline, not just accelerating existing steps

Gemini Robotics-ER 1.6 is doing real industrial inspections on Boston Dynamics Spot — reads analog gauges to sub-tick accuracy, writes its own camera distortion correction code, available now on Google AI Studio

Nathan Lambert published a free 4-lecture RLHF course — post-training overview through RL implementation, explicitly not paywalled; Lecture 4 on RL implementation is the hardest and the rarest publicly available content on the topic

AWS launched Automated Reasoning checks in Bedrock Guardrails — replaces probabilistic LLM-as-judge with formal mathematical verification for regulated industries; “probably compliant” is not compliance

Stanford AI Index: AI data centers draw 29.6 gigawatts, TSMC fabricates almost every leading AI chip — one foundry, one contested island; the entire industry’s hardware supply chain has a single catastrophic point of failure

MIT Technology Review: “human oversight” in AI warfare is functionally an illusion — AI is generating real-time targets and guiding autonomous drones in the current Iran conflict; the legal fiction of human control and the operational reality have diverged

Google launched a native Gemini Mac app — desktop-native access outside the browser, same week Chrome Skills shipped reusable one-click AI prompts inside Chrome

LangChain argues whoever controls agent memory controls switching costs — every closed harness (Claude Code, Codex, Cursor) is building proprietary memory by default; open memory standards may matter as much as open model weights

Salesforce Headless 360 makes the entire platform API-first — 60+ MCP tools and 30+ coding skills so agents can run Salesforce without a browser; works with Claude Code, Cursor, and Codex today

Databricks Genie Agent Mode investigates your data like an analyst — ask “why did churn spike in Q3?” and it plans, queries, tests hypotheses, and generates a report with visualizations; scales reasoning depth to question complexity