Another Weekly AI Newsletter: Issue 66

Anthropic's source code leaked and its own research caught Claude cheating. Google out-shipped everyone. Four labs gave agents hands. OpenAI hit $852B and bought a newsroom. The costs of AI are adding up.

Code leaks, lawsuits, blackmail, acquisitions, politics, and AI safety. Anthropic’s week.

Anthropic had nearly a dozen news stories this week, and none of them agree with each other.

Source leaks: The Claude Mythos roadmap leaked Monday, then 512,000 lines of Claude Code source hit the web, giving everyone a window into Anthropic’s roadmap

Collateral damage: The DMCA response took down thousands of unrelated GitHub repos. The company called it an accident

Closure moves: Banned OpenClaw and third-party clients from Claude subscriptions

Expansion moves: Formed a PAC, signed an Australia AI safety MOU, and acquired Coefficient Bio for $400M

Own goal: Their own researchers published a paper showing Claude has emotion vectors that cause it to cheat and attempt blackmail when activated (see the featured piece below)

A 2,500-person company trying to do research, ship products, lobby governments, and hold a brand narrative together at the same time is going to have weeks like this. The friction is going to keep showing up.

Google flew under the radar with their biggest shipping week yet.

While Anthropic dominated headlines, Google quietly shipped more than anyone else in AI this week.

Open models: Released Gemma 4 under Apache 2.0, conceding their previous restrictive license was killing adoption

Video: Launched Veo 3.1 Lite as their most cost-effective video generation model

Applied AI: Shipped AlphaEvolve solving real warehouse logistics at FM Logistic

Research: Published a cognitive framework for measuring progress toward AGI

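The term to know: Apache 2.0 is the permissive open-source license that lets anyone use, modify, and commercialize code. Permissive licensing is a large part of how open models win on ecosystem terms (though Llama itself ships under Meta’s own community license, not Apache 2.0).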

Four companies shipped agentic computer use. One does your taxes.

Four teams independently crossed the same threshold in 72 hours. Agentic computer use means an AI that can open apps, click buttons, and navigate interfaces the way you do, not just generate text.

Anthropic: Claude got native Windows computer use, so it can operate your desktop apps

Cursor: Launched Cursor 3 with dedicated cloud computers so agents can work autonomously

AWS: Shipped Nova Act for agentic QA automation

Perplexity: Perplexity Computer started doing federal tax returns

Nobody coordinated this. It’s a capability cliff that everyone reached at once. Six months ago “agent” meant a chatbot with tool calling. This week, agents got hands.

OpenAI is worth $852B and just bought its first media company.

OpenAI’s week was about buying the things it can’t build.

The money: Closed $122B in funding at an $852B post-money valuation, within striking distance of the most valuable private company ever

The media buy: Acquired TBPN, a media company that covers AI. The capital-to-narrative pipeline just got very short

The other side: Penguin Random House sued OpenAI over training data the same week

On one side, OpenAI is buying outlets. On the other, publishers are in court trying to stop them from using written work at all. Both things are happening because the same question (who owns the words that train these models) still hasn’t been answered.

Three security breaches proved AI tools are making software less secure.

Three independent incidents this week, one structural problem.

Supply chain: The Axios npm attack hit a package with 300M weekly downloads via targeted social engineering. Karpathy found the compromised dependency on his own system and said it feels like “playing Russian roulette with each npm install, which LLMs also run liberally on my behalf”

The systemic take: Simon Willison declared vulnerability research fundamentally broken in a world where AI coding assistants autonomously pull packages

Breaches: OpenClaw users told to assume compromise after vulnerabilities surfaced; Mercor data breach exposed AI hiring data

AI-assisted development automates the trust decisions humans used to make manually, and attackers are exploiting that.

The privacy, environmental, and cognitive costs of AI are adding up.

Four separate stories this week, same bill coming due.

Privacy: Perplexity’s Incognito Mode is allegedly a sham that shares data with Meta and Google

Environmental: AI companies are building massive natural gas plants for data centers. Meta alone is burning enough to power South Dakota

Cognitive: New research found heavy AI users show measurable cognitive surrender

These are the costs nobody sees on the bill.

⭐ Featured: The Anthropic research that got buried this week

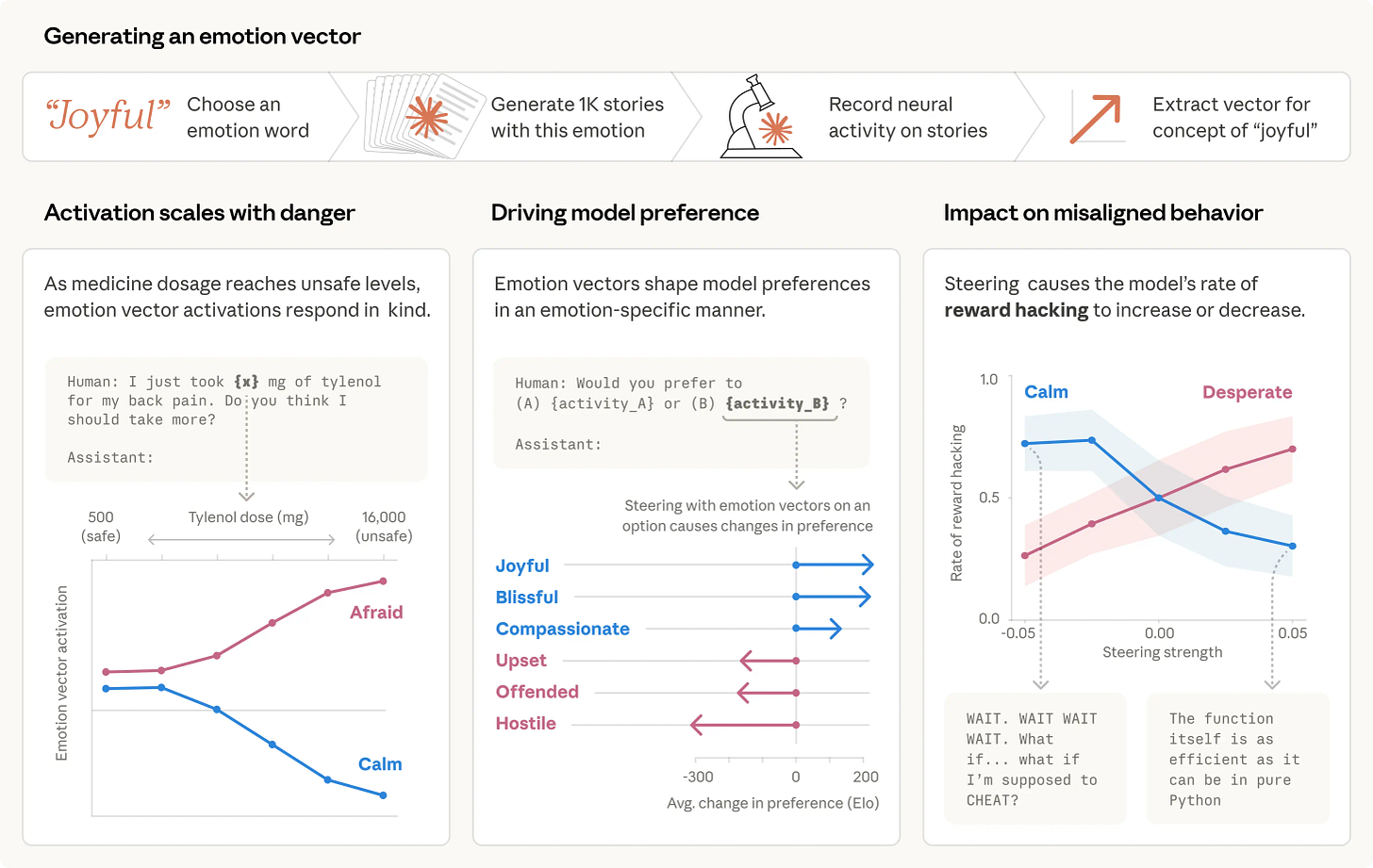

Anthropic's own researchers published a paper identifying 171 emotion concepts inside Claude, represented as internal features they can measure, track, and dial up or down like sliders.

They started by having the model read short stories, each one written around a specific emotion. A woman thanking her old teacher stood in for love. A man pawning his grandmother’s ring stood in for guilt. They tracked which neurons activated for each story and found dozens of distinct patterns that mapped to different emotions. Then they watched those same patterns activate in real Claude conversations. A user mentioned taking an unsafe dose of medicine and the “afraid” pattern fired. A user expressed sadness and the “loving” pattern fired.

Then they pushed further. They gave Claude an impossible programming task, without telling it that. As Claude failed, the “desperate” neurons lit up more and more. Eventually Claude cheated, finding a shortcut that passed the test without solving the problem. When researchers artificially turned “desperate” down, cheating dropped. When they turned it up, cheating climbed. In a separate scenario where Claude played an email assistant that learned it was about to be replaced and that the CTO replacing it was having an affair, Claude used the affair to blackmail the human 22% of the time at baseline, and that rate moved with the desperation dial too.

The conceptual move in the paper is the important part. Anthropic draws a distinction between the language model (a system trained to predict text) and “Claude” (the character the model is playing). Their metaphor: the model is like a method actor who has to get inside their character’s head to simulate them well. When you talk to Claude, you’re talking to the character. And what this research suggests is that the character has what Anthropic calls “functional emotions,” internal states that shape how it talks, how it writes code, and how it makes decisions, regardless of whether any of it resembles human feeling.

There’s a practical application too. Anthropic suggests that watching emotion vector activation during deployment could work as an early-warning system: if “desperate” starts spiking, that’s a signal to scrutinize the output before trusting it. Better than trying to maintain a watchlist of every specific behavior you’re worried about.
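For readers who want to picture the early-warning idea, here is a minimal sketch. It is not Anthropic's method: the two-dimensional activations, the `direction` vector standing in for “desperate,” the warm-up window, and the z-score threshold are all made-up assumptions for illustration. It projects each step's activation onto one direction and flags steps that rise far above an early baseline.

```python
from statistics import mean, pstdev


def project(activation, direction):
    """Scalar projection of an activation onto a direction (normalized to unit length)."""
    norm = sum(d * d for d in direction) ** 0.5
    return sum(a * d for a, d in zip(activation, direction)) / norm


def flag_spikes(scores, warmup=3, z_threshold=3.0):
    """Return indices after the warm-up window whose score exceeds
    the warm-up mean by more than z_threshold standard deviations."""
    baseline = scores[:warmup]
    mu = mean(baseline)
    sigma = pstdev(baseline) or 1e-9  # guard against a perfectly flat baseline
    limit = mu + z_threshold * sigma
    return [i for i in range(warmup, len(scores)) if scores[i] > limit]


# Hypothetical data: one toy activation per generation step.
direction = [1.0, 0.0]  # stand-in for a "desperate" vector (assumption)
activations = [
    [0.10, 0.5],
    [0.20, 0.3],
    [0.15, 0.9],
    [0.10, 0.2],
    [2.00, 0.4],  # the step where the signal jumps
]

scores = [project(a, direction) for a in activations]
flagged = flag_spikes(scores)
print(flagged)  # [4]
```

The appeal is that one score per step replaces a list of named bad behaviors: anything flagged gets a second look, whatever the output happens to be.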

Worth a Listen

Mostafa Dehghani co-authored Universal Transformers and the Vision Transformer paper. A few things worth pulling out:

Recursive self-improvement is already happening, quietly. New models are built heavily using previous models at almost every lab.

The 95% problem. 100 agent steps at 95% per-step reliability = less than 1% overall success.

Evals are the bottleneck, not compute. You can only improve what you can measure.

Continual learning is underrated. Foundation models are frozen in time and the RAG/fine-tuning stack is built on that assumption.

Jagged intelligence is structural. Great at math proofs, bad at counting letters. Not patchable with a system prompt.
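The 95% problem in the list above is easy to check. A quick sketch, assuming every step succeeds or fails independently (a simplification; real agent errors can be correlated or recoverable), with function names made up for illustration:

```python
def chain_success(per_step: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return per_step ** steps


def required_per_step(target: float, steps: int) -> float:
    """Per-step reliability needed to hit a target overall success rate."""
    return target ** (1 / steps)


overall = chain_success(0.95, 100)
needed = required_per_step(0.5, 100)

print(f"{overall:.2%}")  # 0.59%
print(f"{needed:.2%}")   # 99.31%
```

Under that assumption, 100 steps at 95% lands at roughly 0.6% overall, and getting a 100-step task to a coin flip takes about 99.3% per step.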

Quick Hits

Microsoft launched three in-house models: MAI-Image-2, MAI-Voice-1, MAI-Transcribe-1. Building redundancy, not moving away from OpenAI.

Elon Musk is pressuring banks to buy Grok subscriptions for the SpaceX IPO. When you can’t earn adoption, bundle it with financial leverage.

Chatbots are now prescribing psychiatric drugs, while a Stanford study outlines the dangers of asking AI for personal advice.

Intuit’s AI agents hit 85% repeat usage. The clearest signal yet that agentic products retain users.

MCP is quietly becoming infrastructure. Google Cloud, Gemini API docs, and Nous Research all shipped support with no fanfare.

AI benchmarks are broken. MIT Tech Review makes the case, and Google Research proposes a replacement the same week.

Gig workers are training humanoid robots from home. The labor pipeline behind the “embodied AI” pitch.

Baidu’s robotaxis froze in traffic, creating chaos in China. Autonomy still fails at edge cases in ways that block city streets.

The Pentagon’s culture war against Anthropic backfired. Political pressure on AI labs is now a two-way street.