Another Weekly AI Newsletter: Issue 60

Amazon and Google bet on MCP for enterprise agents, three frontier models drop in one week, Z.ai ships GLM-5 on Huawei chips, and India has a big week in AI

The Week’s Thesis

MCP is finding its footing in the enterprise. Amazon made Quick an MCP client, letting partners expose capabilities as tools its agents can invoke. Google went in the other direction: managed MCP servers for AlloyDB, Spanner, Cloud SQL, Firestore, and Bigtable that give any MCP-compliant agent a standard interface to their data layer with no infrastructure to deploy. Both chose MCP as the contract. The promise is speed to information and action from a single interface, but how you measure that return is still an open question.

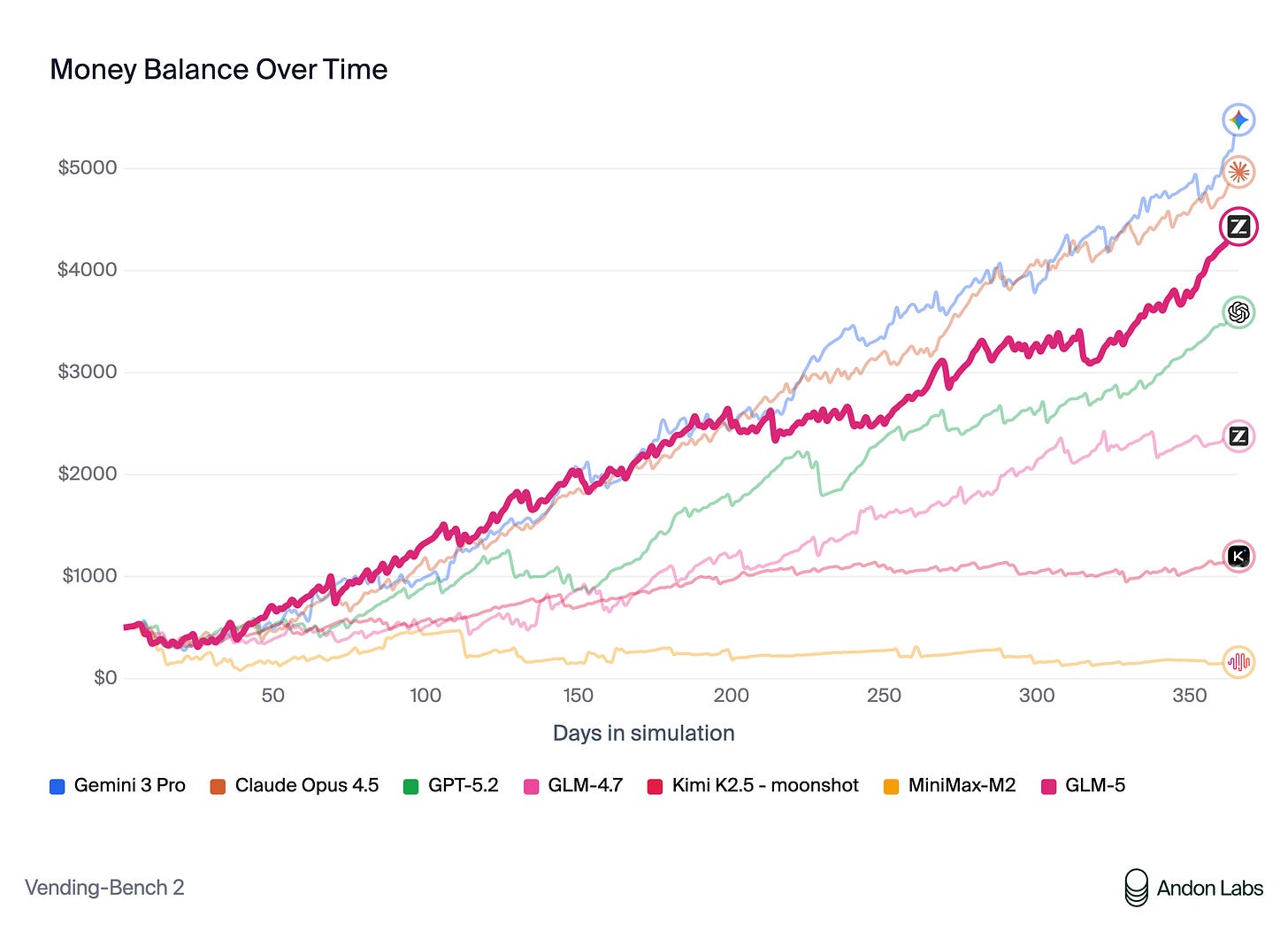
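To make "MCP as the contract" concrete, here is a minimal sketch of the two JSON-RPC 2.0 messages an MCP client typically sends: one to discover a server's tools and one to invoke a tool. The `tools/list` and `tools/call` method names come from the MCP specification. The tool name `run_query` and its `sql` argument are hypothetical placeholders, not the actual tools exposed by Amazon's or Google's offerings, and transport and session initialization are left out.

```python
import json
from itertools import count

# Monotonic request ids; JSON-RPC pairs each response with its request by id.
_ids = count(1)

def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request of the kind MCP clients send."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg

# Step 1: ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# Step 2: invoke one tool by name with structured arguments.
# "run_query" and its "sql" argument are hypothetical, for illustration only.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "run_query", "arguments": {"sql": "SELECT 1"}},
)

print(json.dumps(list_tools, indent=2))
print(json.dumps(call_tool, indent=2))
```

The point of the sketch is that the agent side looks the same whichever vendor sits behind the server: discover tools, then call them by name.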

Three frontier models dropped this week, and the pricing gap between open and closed got harder to ignore. Sonnet 4.6, Gemini 3.1 Pro, and GLM-5 all posted competitive benchmarks. On OpenRouter, GLM-5 runs at $0.95/$2.55 per million input/output tokens versus Sonnet at $3/$15 and Gemini at $2/$12. That puts GLM-5's input tokens at roughly a third of Sonnet's price and its output tokens at about a sixth. For agentic workloads, which resend a growing context on every step, that gap compounds fast. The models are becoming table stakes; the differentiation is what surrounds them.
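A rough way to see how the gap plays out is to price a single agent run at the OpenRouter rates quoted above. The sketch below is back-of-the-envelope arithmetic, not a billing model. The workload shape (50 steps, 20,000 input tokens and 1,000 output tokens per step) is an assumption chosen for illustration, and it ignores prompt caching, long-context pricing tiers, and retries.

```python
# USD per million tokens (input, output), as quoted above from OpenRouter.
PRICES = {
    "GLM-5": (0.95, 2.55),
    "Sonnet 4.6": (3.00, 15.00),
    "Gemini 3.1 Pro": (2.00, 12.00),
}

def run_cost(model, steps, input_tokens_per_step, output_tokens_per_step):
    """Cost in USD of one agent run, assuming flat per-token pricing."""
    in_price, out_price = PRICES[model]
    total_in = steps * input_tokens_per_step
    total_out = steps * output_tokens_per_step
    return (total_in * in_price + total_out * out_price) / 1_000_000

# Hypothetical workload: 50 steps, 20k input and 1k output tokens per step.
costs = {model: run_cost(model, 50, 20_000, 1_000) for model in PRICES}

for model, cost in costs.items():
    print(f"{model}: ${cost:.2f} per run")
```

Under those assumptions a run costs about $1.08 on GLM-5, $3.75 on Sonnet 4.6, and $2.60 on Gemini 3.1 Pro. Multiply by thousands of runs a day and the difference becomes a budget line.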

Agent autonomy is outrunning evaluation. Anthropic’s research shows Claude Code sessions running autonomously 2x longer than three months ago, with experienced users auto-approving more than 40% of sessions. Meanwhile, Amazon is grappling internally with how to evaluate thousands of agents and is publishing a whole framework for it. And DeepMind is asking whether models have genuine moral reasoning at all or are just pattern-matching on ethics. Deployment velocity is well ahead of our ability to assess what these agents are actually doing.

The global map is shifting too. OpenAI, Google, Microsoft, and Anthropic all showed up to the India AI Impact Summit this week with infrastructure commitments. OpenAI announced “OpenAI for India” focused on sovereign infrastructure and workforce upskilling. Microsoft pledged a multi-billion-dollar initiative to close the AI adoption gap. Anthropic opened a Bengaluru office. When every major lab converges on the same market in the same week, it tells you where the growth is.

Quick Hits

Here are the 17 US-based AI companies that have raised $100M or more in 2026 | TechCrunch — 17 mega-rounds in under two months. The capital is betting on infrastructure and vertical agents, not foundation models.

Anthropic and Infosys collaborate to build AI agents for telecommunications | Anthropic News — Regulated industries are where agents get real. Telecom compliance is messy enough to justify the investment.

KLong: Training LLM Agent for Extremely Long-horizon Tasks | arXiv — Agents that can hold context across hundreds of steps. The gap between “demo agent” and “production agent” starts here.

Unauthorized OpenAI Equity Transactions | openai.com — OpenAI had to publicly warn people about unauthorized equity offers. When your stock is hot enough to attract scams, that’s its own signal.

Towards Anytime-Valid Statistical Watermarking | arXiv — As agents generate more content autonomously, knowing what’s machine-made becomes an infrastructure problem, not a nice-to-have.

Anthropic and the Government of Rwanda sign MOU for AI in health and education | Anthropic News — A model for government-AI partnerships that starts with local context and capacity building, not top-down deployment.

Anthropic partners with CodePath to bring Claude to the US’s largest collegiate CS program | Anthropic News — The next generation of developers will learn to code with AI from day one. That changes what “junior engineer” means in three years.

Featured Article: GLM-5: China’s First Public AI Company Ships a Frontier Model

Z.ai (formerly Zhipu AI) released GLM-5 on February 11, a 744B-parameter mixture-of-experts model with 40B active parameters per token and a 200K context window. It’s the first open-weight model to hit 50 on Artificial Analysis’ Intelligence Index, and it’s released under an MIT license.

The benchmarks tell a competitive story. GLM-5 scored 77.8% on SWE-bench Verified, beating Gemini 3 Pro (76.2%) and trailing Claude Opus 4.5 (80.9%). On AIME 2026 it hit 92.7%, essentially matching Opus. On BrowseComp, it scored 62.0, roughly 1.7x Opus 4.5’s 37.0. It’s the #1 open-weight model on LMArena and #11 overall.

What makes this release structurally significant is what’s underneath it. GLM-5 was trained entirely on Huawei Ascend 910B chips using the MindSpore framework. Zhipu has been on the US Entity List since January 2025 with no access to NVIDIA H100s. A frontier-competitive model built without any Western compute hardware is a data point that changes the export control conversation.

The caveats are real. GLM-5 is text-only with no multimodal support. Independent testers have flagged questions about benchmark methodology and noted the model can be aggressive in task execution without strong situational awareness. Running it locally requires ~1.5TB of VRAM. But for the open-weight ecosystem, this is a milestone: frontier-class intelligence, MIT-licensed, at a fraction of closed-model pricing.
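The ~1.5TB figure lines up with simple arithmetic on the parameter counts given earlier. The sketch below assumes 16-bit weights (2 bytes per parameter), counts weights only, and uses decimal terabytes; KV cache and activations would add to it. The 4-bit row is a hypothetical comparison, not a claim that such a build of GLM-5 exists.

```python
TOTAL_PARAMS = 744e9   # total parameters, from the release details above
ACTIVE_PARAMS = 40e9   # parameters active per token

def weight_memory_tb(params, bytes_per_param):
    """Memory for model weights alone, in decimal terabytes."""
    return params * bytes_per_param / 1e12

# Assumption: weights stored in a 16-bit format (2 bytes per parameter).
bf16_tb = weight_memory_tb(TOTAL_PARAMS, 2)

# Hypothetical 4-bit quantization (0.5 bytes per parameter), for comparison.
int4_tb = weight_memory_tb(TOTAL_PARAMS, 0.5)

# Share of the model that does work on any given token.
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

print(f"16-bit weights: {bf16_tb:.3f} TB")
print(f"4-bit weights (hypothetical): {int4_tb:.3f} TB")
print(f"Active per token: {active_fraction:.1%}")
```

The 16-bit case works out to 1.488 TB. The mixture-of-experts design cuts compute per token, since only about 5% of the parameters are active, but every expert still has to sit in memory.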

What to watch for: Whether the published benchmarks hold up under independent evaluation, and whether the Ascend-trained approach becomes a template for other Chinese labs navigating export controls.

Watch This

Brian walks through setting up a multi-agent team using OpenClaw, covering dedicated machines, access permissions, cost configurations, and API token optimization across different models.

Also This Week

Using Google Cloud AI to measure the physics of US freestyle snowboarding | Google Cloud AI

Evaluating Chain-of-Thought Reasoning through Reusability and Verifiability | arXiv

Gemini 3.1 Pro - Model Card | DeepMind

Robots and AI Are Working Together to Bring You Better Medicines | NIST

A message from our CEO, Sundar Pichai | Google AI Blog

What I’m Watching

OpenAI’s acqui-hire playbook. Steinberger built OpenClaw into the most-starred open-source agent project on GitHub in four months, and now he’s inside OpenAI building “the next generation of personal agents.” The project moves to a foundation, but the founder’s vision moves with him. If OpenAI keeps pulling in open-source agent talent, it signals a shift from model company to agent platform company.

The agent evaluation reckoning. Three separate organizations flagged the same problem this week: we’re deploying agents faster than we can evaluate them. Autonomous sessions are getting longer, tool access is getting broader via MCP, and falling prices are making it cheaper to scale. My bet is that something breaks publicly before the evaluation frameworks catch up. The teams building those frameworks now have a head start.