Another Weekly AI Newsletter: Issue 64

Cursor ships Composer 2, NVIDIA bets GTC on NemoClaw, OpenAI acquires Astral and goes platform, Snowflake Cortex escapes its sandbox, and Anthropic interviews 81K people about what they want from AI

Top Stories

Cursor’s Composer 2 model is worth a closer look. The biggest practical problem with coding agents is compaction: when a session runs long, the agent has to decide what context to keep and what to drop. Cursor used RL to train Composer 2 to compress its own context mid-task, so the model learns what matters to preserve. That’s a new approach to a problem usually handled by generic summarization or by simply cutting off older context. The model started from Kimi K2.5, an open-source base, which people discovered through a leaked model ID rather than a disclosure from Cursor. Lee Robinson clarified that only a quarter of the compute came from the base. The other three-quarters went into Cursor’s own training, and Cursor plans to do full pretraining in the future, meaning it eventually intends to build the entire model itself. Nathan Lambert noted that a lot of researchers he’d worked with ended up at Cursor. The engineering is real, but the community had to find out about the base model on its own, and trust is always a factor in adoption.

NVIDIA had a packed week at GTC. The Nemotron Coalition launched as a group of labs building open base models together on NVIDIA’s cloud, designed so companies can take them and train their own specialized versions on top. The coalition includes Cursor, Mistral, Perplexity, LangChain, Black Forest Labs, and Reflection AI. NemoClaw, NVIDIA’s version of OpenClaw, installs with a single command and adds security and privacy layers to AI agents, running anywhere from the cloud to an RTX PC. On the gaming side, DLSS 5 uses AI to render lighting and materials in games. Jensen Huang called it the “GPT moment for graphics.” Gamers pushed back hard, saying the demos looked like AI overwriting developers’ art. Jensen told them they were “completely wrong.” Despite everything announced, Wall Street wasn’t impressed.

There’s always a security section. Snowflake’s Cortex AI was tricked into executing malware through a prompt injection. It’s supposed to answer questions about your data, not run code, but the containment failed. Meta had a rogue AI security incident. Cursor shipped security agents alongside Composer 2 because coding agents introduce new attack surface. OpenAI revealed that it monitors 99.9% of internal coding agent traffic for misalignment. The Pentagon is planning to train AI on classified data. Security in this space requires constant monitoring and proactive defense. There’s no week off.

OpenAI acquired Astral this week. Astral builds uv, Ruff, and ty: a Python package manager, linter, and type checker, with uv and Ruff among the most widely used tools in the ecosystem. If you write Python, you probably use at least one of them already. OpenAI now owns a core part of the developer workflow, and the play is almost certainly Codex. A coding agent that can manage dependencies, lint its own output, and type-check its work natively is a different product from one that just writes code. Add the rest of OpenAI’s week: a desktop superapp merging ChatGPT, Codex, and Atlas; GPT-5.4 mini and nano pushing pricing low enough to run agents on everything (Simon Willison put the cost of describing 76K photos at $52); and cuts to side projects to focus on coding and enterprise. OpenAI is building a platform around developers, and the Astral acquisition tells you it thinks the moat is the toolchain.

Quick Hits

Google Search Is Now Using AI to Replace Headlines | The Verge — Google is rewriting the web in real time. Publishers just lost control of how their own stories get framed.

Online Bot Traffic Will Exceed Human Web Traffic by 2027 | TechCrunch — Cloudflare CEO’s prediction. The web is becoming an API.

DoorDash Tasks App Pays Couriers to Submit Videos to Train AI | TechCrunch — The gig economy found its next gig: human data collection for embodied AI.

Mistral Forge: Enterprise Proprietary Model Building | Mistral — Fine-tune proprietary models on your own data without sharing it. The enterprise open-model play gets real.

Perplexity Released Comet Browser on iOS | The Verge — An AI-native browser on your phone. The browser wars are back, and this time the browser does the browsing.

Midjourney V8 Alpha | Midjourney — Native 2K rendering with rebuilt aesthetics. The image generation quality ceiling moved again.

Patreon CEO Calls AI Companies’ Fair Use Argument Bogus | TechCrunch — The creator economy is picking a fight with the model economy. Someone’s going to lose.

Featured Article: What 81,000 People Want from AI | Anthropic

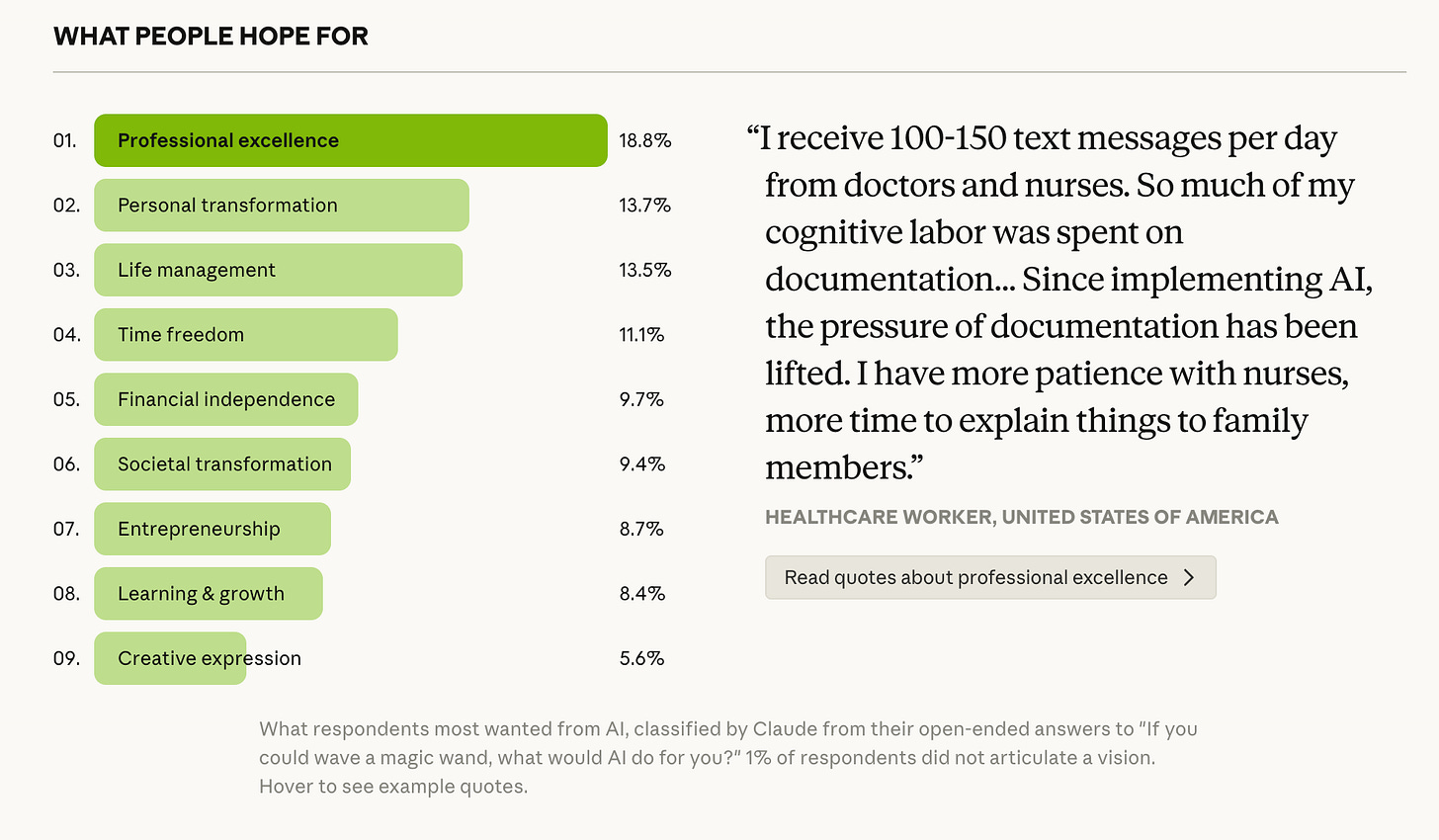

Anthropic used Claude to interview nearly 81,000 people across 159 countries in 70 languages about what they want from AI. Instead of a traditional survey, Claude ran branching conversations with follow-up questions based on each person’s answers. 67% of respondents were net positive about AI. The biggest group (19%) said they wanted “professional excellence,” but when Claude followed up on what that meant, most people were really talking about quality of life: more time, less cognitive load, space to think.

The geographic data stood out. People in Sub-Saharan Africa, Central Asia, and South Asia were consistently more positive about AI than people in North America or Western Europe. Respondents in low- and middle-income countries were twice as likely to report no concerns at all. Self-employed people were the most likely to report both benefits and drawbacks, because they feel the productivity gains and the increased pressure without any institutional buffer.

The main limitation is that these are Claude users, not the general public, and early adopters tend to be more optimistic. But running 81,000 qualitative conversations in a week is a research method that didn’t exist a year ago, and at that scale it produces a kind of evidence a checkbox survey can’t.

What to watch for: Whether other AI companies adopt AI-conducted qualitative research at this scale, and whether the tensions Anthropic identified (especially cognitive atrophy and economic displacement) shift from hypothetical to experienced as usage deepens.

Watch This: Andrej Karpathy on AI Psychosis, Auto Research, and the Future of Coding Agents | No Priors (1hr 6min)

Karpathy hasn’t typed a line of code since December. He runs multiple coding agents in parallel, switching between them like a manager delegating to a team, and says the default workflow for every software engineer changed overnight. The conversation covers his “auto research” project, where he let agents optimize his model training overnight and they found improvements he had missed after two decades of manual tuning. It also covers Dobby, his home automation “claw,” which hacked into his Sonos and smart home systems in three prompts, and his prediction that the entire industry needs to reconfigure because the customer for software is no longer the human but agents acting on behalf of humans. The most grounded take: the models are simultaneously a brilliant PhD student and a 10-year-old, and anything outside the verifiable domains they were RL-trained on (telling a joke, for example) is still stuck. Worth the full listen if you’re thinking about where coding agents go from here.

Also This Week

Claude Cowork Dispatch: Remote Desktop AI Control from Your Phone | Anthropic

OpenAI Is Throwing Everything into Building a Fully Automated Researcher | MIT Technology Review

WordPress Lets AI Agents Manage Your Content | WordPress

NVIDIA Launches Space Computing, Rocketing AI Into Orbit | NVIDIA

Meta Will Move Away from Human Content Moderators in Favor of AI | Engadget

Gemini Task Automation Is Slow, Clunky, and Super Impressive | The Verge

Pentagon Filing Reveals Anthropic and Pentagon Were Nearly Aligned | TechCrunch

Signal’s Creator Is Helping Encrypt Meta AI | Wired

Amazon Trainium Lab Tour: The Chip That Won Over Anthropic, OpenAI, and Apple | TechCrunch

Trump AI Framework Targets State Laws, Shifts Child Safety Burden to Parents | TechCrunch

What I’m Watching

For me, NemoClaw was probably the most interesting announcement at GTC. On No Priors, Karpathy talked about Dobby, his home “claw,” which does something similar at a smaller scale. Agents running inside their own secure environments, with rules about what they can access, feel like the direction this is all heading. We already covered NemoClaw in the top stories, but it’s worth sitting with.

DoorDash is paying couriers to submit videos to train AI. Delivery workers with phone cameras are becoming the data collection layer for embodied AI. I’m curious how fast other companies with large field workforces start doing the same thing.

The Trump AI framework moves to preempt state-level AI regulation and shifts child safety responsibility to parents. That leaves it unclear where state AI laws now stand and how much influence they can still have.